Fifth edition

Dana Lee Ling

College of Micronesia-FSM

Pohnpei, Federated States of Micronesia

QA276

2012 College of Micronesia-FSM.

This work is licensed under the Creative Commons Attribution 3.0 License. To view a copy of this license, visit

http://creativecommons.org/licenses/by/3.0/ or send a letter to Creative Commons, 171 Second Street, Suite 300, San Francisco, California, 94105, USA.

Printed in the Federated States of Micronesia

Introduction to Statistics

Using LibreOffice.org Calc

Preface

Chapters

Software notes

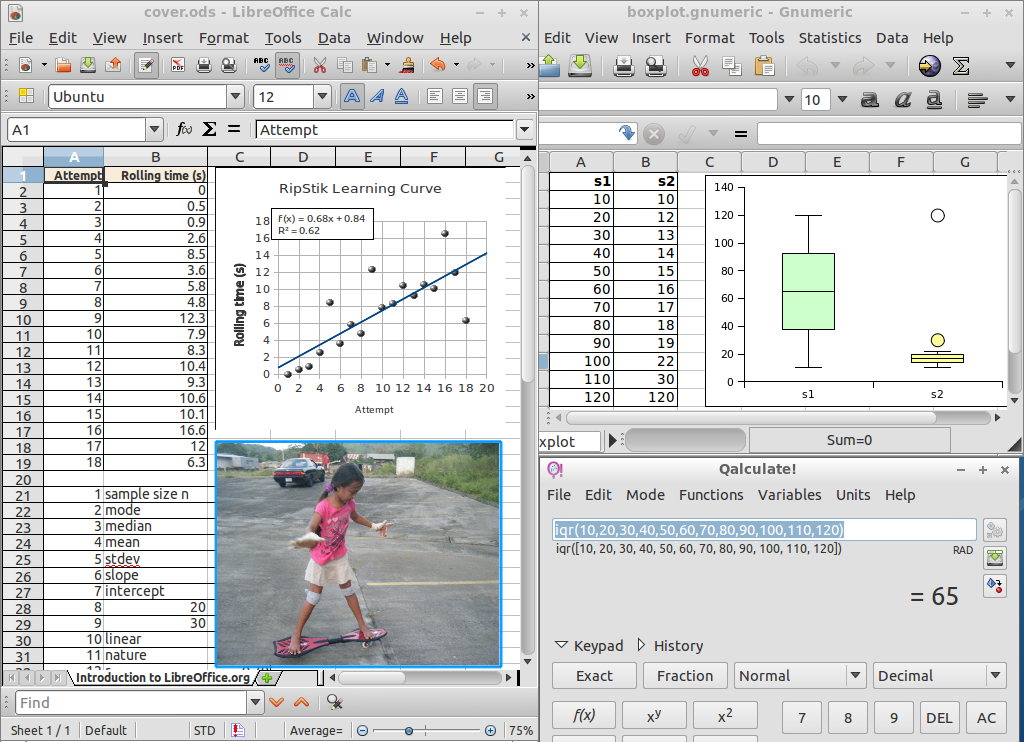

This statistics text utilizes LibreOffice.org Calc to make statistical calculations. This text also includes references to Microsoft Excel functions where they differ from LibreOffice.org Calc functions. The text also uses Gnome Gnumeric to generate box plots.

The text does not use any add-ins, add-ons, statistical extensions, or separate dedicated proprietary statistical packages. This choice is very deliberate. Course alumni and readers of this text are most likely to encounter default installations of spreadsheet software without such additional software. Course alumni should not feel that they cannot "do" statistics because they lack a special add-in or dedicated package function that may require administrative privileges to install. Given an "out-of-the-box" installation of a spreadsheet, course alumni, or for that matter any reader of this text, should be able to generate and use the statistics introduced by this text.

This text utilizes HyperText Markup Language, Scalable Vector Graphics, and Mathematical Markup Language (HTML+SVG+MathML). A browser such as Firefox 4 or higher is required to properly display and print this text.

We all walk in an almost invisible sea of data. I walked into a school fair and noticed a jump rope contest. The number of jumps for each jumper until they fouled out was being recorded on the wall. Numbers. With a mode, median, mean, and standard deviation. Then I noticed that faster jumpers attained higher jump counts than slower jumpers. I saw that I could begin to predict jump counts based on the starting rhythm of the jumper. I used my stopwatch to record the time and total jump count. I later found that a linear correlation does exist, and I was able to show by a t-test that the faster jumpers have statistically significantly higher jump counts. I later incorporated this data in the fall 2007 final.

I walked into a store back in 2003 and noticed that Yamasa soy sauce appeared to cost more than Kikkoman soy sauce. I recorded prices and volumes, working out the cost per milliliter. I eventually showed that the mean price per milliliter for Yamasa is higher than Kikkoman. I also ran a survey of students and determined that students prefer Kikkoman to Yamasa.

My son likes articulated mining dump trucks. I found pictures of Terex dump trucks on the Internet. I wrote to Terex in Scotland and asked them how the prices vary for the dump trucks, explaining that I teach statistics. "Funny you should ask," a Terex sales representative replied in writing. "The dump trucks are basically priced by a linear relationship between horsepower and price." The representative included a complete list of horsepower and price.

One term I learned that a new Cascading Style Sheets level 3 color specification for hue, saturation, and lightness was available for HyperText Markup Language web pages. The hue was based on a color wheel with cyan at the 180° middle of the wheel. I knew that Newton had put green in the middle of the red-orange-yellow-green-blue-indigo-violet rainbow, but green is at 120° on a hue color wheel. And there is no cyan in Newton's rainbow. Could the middle of the rainbow actually be at 180° cyan, or was Newton correct to say the middle of the rainbow is at 120° green? I used a hue analysis tool to analyze the image of an actual rainbow taken by a digital camera here on Pohnpei. This allowed an analysis of the true center of the rainbow.

While researching sakau consumption in markets here on Pohnpei I found differences in means between markets, and I found a variation with distance from Kolonia. I asked some of the markets to share their cup tally sheets with me, and a number of them obliged. The data proved interesting.

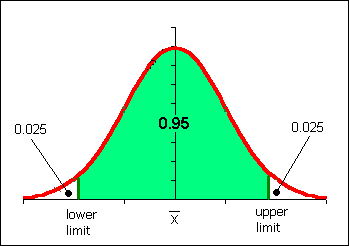

The point is that data is all around us all the time. You might not go into statistics professionally, yet you will always live in a world filled with numbers and data. For one sixteen-week term in your life I want you to walk with an awareness of the data around you. At midterm you will turn in a proposed ratio level data set with basic statistics. You pick the data - you decide on the sample. At term's end you will add a 95% confidence interval for your data set and turn in a final, completed project.

Numbers flow all around you. A sea of data pours past your senses daily. The world is numbers. Watch for numbers to happen around you. See the matrix. When you observe numbers happening, record them.

Find something original, something unique to your life. Avoid doing a project on an example used in class such as favorite color, car counts, step counts, or other in-class examples.

Four separate mini-projects are turned in. The first is due on the day of test one, the second on the day of test two, the third on the day of test three, and the fourth on the last Friday of class. The data for the mini-projects is based on a theme. Each mini-project will include a written description of the sample and sampling procedure, the data, and the basic statistics as specified for that project. Each project should be done in word processing software with tables and graphs pasted in from a spreadsheet. If you are using Google Docs, work with the instructor on limitations peculiar to Docs.

| points | statistics |

|---|---|

| 2 | sample data, sampling procedure description: what was measured, when, how |

| 4 | basics: n, min, max, range |

| 4 | middle: midrange, mode, median, mean |

| 2 | spread: standard deviation, coefficient of variation |

| 5 | frequency table |

| 3 | histogram chart |

| 20 |

| [d] Data [max 4] | |

|---|---|

| 1 | sampling procedure description: what was measured, when, how |

| +2 | xy paired data in two columns |

| +1 | ≥ five data pairs |

| [t] Data table format [max 3] | |

| 3 | Clear, concise, well thought out, informative, labels and units in the head, borders |

| 2 | Missing borders or other minor format inconsistencies, or missing units in the head |

| 1 | Incomplete, runs off edges of page, lacks minimal margins, or missing two or more elements such as borders and units |

| [g] Data display: Graph [max 3] | |

| 3 | Correct graph type, correct axis labels |

| 2 | Missing a label or other element, or reversed x and y axes on an xy scattergraph |

| 1 | wrong graph type, or plotting the wrong data, or wrong result on graph, runs off page, or otherwise incorrect |

| [a] Analysis [max 4] | |

| +1 | slope |

| +1 | intercept |

| +1 | correlation r |

| +1 | coefficient of determination |

| [q] Quality [max 5] | |

| 5 | Excellent: original, interesting, shows strong effort and creativity, data took time and effort to acquire, project shows planning and forethought well laid out and presented. |

| 4 | Good: original, interesting, shows some effort and planning, data may have been easily acquired but is well presented. |

| 3 | Fair: acceptable level of effort, reasonable data for investigation |

| 2 | Weak: unoriginal, clearly no meaningful correlation, data inappropriate to paired analysis, lack of effort. Or a correlation that indicates manufactured data. |

| 1 | Disaster area: data is not paired data, little to no effort made. |

| 19 | |

| points | statistics |

|---|---|

| 4 | sample data, sample description, source citation, citation in APA format |

| 4 | basics: n, min, max, range |

| 4 | middle: midrange, mode, median, mean |

| 2 | spread: standard deviation, coefficient of variation |

| 4 | standard error of the mean, tcritical, margin of error of the mean, 95% confidence interval |

| 18 |

| points | statistics |

|---|---|

| 3 | sample data from term start, sample data from term end, sampling procedure description paragraph: what was measured, when, how |

| 4 | basics: n, min, max, range (both samples) |

| 4 | middle: midrange, mode, median, mean (both samples) |

| 2 | spread: standard deviation, coefficient of variation (both samples) |

| 5 | t-test: null and alternate hypothesis, p-value, maximum confidence, and interpretation of result |

| 18 |

Depending on the instructor's preference, an alternative is a single ongoing term long project. Other designs, besides those included in this text, would also be appropriate.

Ratio level data is preferable. If you opt to work with nominal or ordinal level data, please meet with your instructor for guidance and advice on how to best proceed.

Ideas under consideration due at start of fourth week of class (5 points)

First draft due at midterm (25 points)

Second draft must be an integrated word processing document (max 30 points)

Final draft as integrated word processing document due roughly a week or two prior to the end of the term. (max 30 points)

Statistics to report in the first, second, and final draft vary according to the level of measurement of the project. Ratio level data is preferred.

Note that at the nominal level, the data is usually reported in a frequency table. That is, the data table and the frequency table are one and the same table.

The items below will appear in the final draft. The corresponding material is not covered until after midterm. If confidence intervals are done by test two, then the second draft should include confidence intervals.

If you found two variable data, then perform a linear regression on the data. Report the slope, intercept, and correlation. Whether or not basic statistics should be reported for one of the variables depends on whether statistics such as the mode, median, and mean have a "meaning" to the study. In most cases the meaning or impact of the study is in the relationship (slope, intercept, r) and not in the basic statistics.

| Start of week four [5] | |

|---|---|

| 5 | Submission of description of project concept, potential sample and sampling method. Should include the appropriate answers to the who, what, where, when, why, and how questions concerning the project and the data to be gathered. In effect, a project topic statement. |

| First draft at test two/midterm [25] | ||

|---|---|---|

| 5 | Submission of description of project concept, potential sample and sampling method. Should include the appropriate answers to the who, what, where, when, why, and how questions concerning the data. What was measured? How was it measured? When? Where? If people were involved, who were they and why were they selected? Is the sample a good random sample or a convenience sample? | |

| 5 | Sample data recorded and reported | |

| Single variable x data | Two variable x,y data | |

| 5 | Basic statistics reported (see list above) | Slope, intercept, r, r² |

| 5 | Frequency table | XY Scattergraph |

| 5 | Histogram chart (done correctly) | |

| Second draft at test three: first draft requirements plus: [30] | |

|---|---|

| 5 | Turned in as a report done using word processing software, document is well laid out, text is double spaced, tables are single spaced, table columns have a head with label and units, table head aligned with data cell contents, tables are appropriately separated, tables and cells have borders |

| Final draft includes first and second draft requirements plus optional bonus: [35] | |

|---|---|

| +5 | For a study using unique and original data that required planning, forethought, and a sustained effort over time to acquire; data that has a variety of values; a study that has thoughtful implications; a thorough study that includes a discussion of data outliers (if any) and potential future extensions or impacts of the study. |

This report would be done in a word processing program such as OpenOffice.org Writer or Microsoft Word, with tables and charts cut and pasted in from a spreadsheet program such as Calc or Excel. The report below includes material such as confidence intervals which would not appear until the second draft or final report.

Jump Rope Contest Statistical Report

Data gathered and analyzed by: Dana Lee Ling

| Jumps |

|---|

| 102 |

| 79 |

| 68 |

| 66 |

| 61 |

| 69 |

| 42 |

| 45 |

| 79 |

| 22 |

| 43 |

| 13 |

| 24 |

| 10 |

| 11 |

| 107 |

| 17 |

| 34 |

| 8 |

| 20 |

| 58 |

| 26 |

| 45 |

| 40 |

| 111 |

| 105 |

| 213 |

On Thursday 08 November 2007 a jump rope contest was held at a local elementary school festival. Contestants jumped with their feet together, a double-foot jump. The data seen in the table is the number of jumps for twenty-seven female jumpers. Participants jumped without stopping until they missed a jump, fouled on their rope, or stopped of their own accord. This was a solo jump rope contest; jumpers spun their own rope. The jumpers ranged in age from approximately six years old to twelve years old.

If a jumper made two or more attempts, only her highest number of jumps was retained in the data table. This was also the procedure used on the sheets of paper on the wall at the school on the day of the contest. Data was gathered by the author from the sheets on the wall, not from his own counts. The names of the jumpers were not recorded as the jumpers were minors. The table includes all of the young women who jumped that day.

Note that while the number of jumps data is discrete, the range and diversity of values permit treatment of the data as if it were continuous data. This data represents a convenience sample and is not a random sample of rope jumpers in general.

| Statistic | Value (Jumps) |

|---|---|

| 1. sample size n | 27 |

| 2. minimum | 8 |

| 3. maximum | 213 |

| 4. range | 205 |

| 5. midrange | 110.5 |

| 6. mode | 45 |

| 7. median | 45 |

| 8. sample mean x | 56.22 |

| 9. standard deviation sx | 44.65 |

| 10. coefficient of variation | 0.79 |

| 11. class width for five classes | 41 |

The large coefficient of variation indicates that the jump data is spread away from the mean. Jumpers are inconsistent in the number of jumps attained; the data shows a lot of variability.

The maximum of 213 jumps is an outlier (z-score = 3.51), an unusually high number of jumps for that day. A highly proficient jumper who had made a previous attempt and achieved 102 consecutive jumps returned and accomplished 213 consecutive jumps.

12. Histogram table

| Classes (x) | Frequency f | RF p(x) |

|---|---|---|

| 49 | 15 | 0.56 |

| 90 | 7 | 0.26 |

| 131 | 4 | 0.15 |

| 172 | 0 | 0.00 |

| 213 | 1 | 0.04 |

| Sums: | 27 | 1.00 |

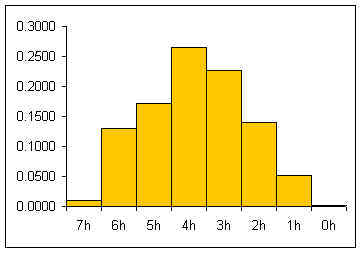

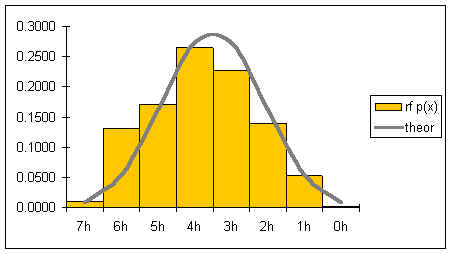

13. Histogram chart

14. Histogram shape: skewed right, bimodal

The skewed histogram illustrates that jump counts above 131 jumps are very rare events.

| Statistic | Value (Jumps) |

|---|---|

| 15. Standard error SE | 8.59 |

| 16. tcritical for a confidence level of 95%: | 2.06 |

| 17. Margin of error E: | 17.66 |

| 18.The 95% confidence interval for this data is: | 38.56 ≤ μ ≤ 73.88 |

The population mean can be estimated to be in the range between 39 and 74 jumps. Observations suggested that faster jumpers achieved higher jump counts than slower jumpers. Future research could examine whether the jump rate is correlated to the total number of jumps.
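The figures in the report above were produced in a spreadsheet. As an optional cross-check outside the spreadsheet, the following is a minimal Python sketch that uses only the standard library. The variable names are illustrative, and the t-critical value is an assumption hard-coded from a t-table for 26 degrees of freedom, because the standard library has no inverse t function. The mode is left out of the sketch.

```python
import math
import statistics

# Jump counts copied from the data table in the report above.
jumps = [102, 79, 68, 66, 61, 69, 42, 45, 79, 22, 43, 13, 24, 10,
         11, 107, 17, 34, 8, 20, 58, 26, 45, 40, 111, 105, 213]

n = len(jumps)                       # sample size, =COUNT(data)
minimum, maximum = min(jumps), max(jumps)
data_range = maximum - minimum
midrange = (minimum + maximum) / 2
median = statistics.median(jumps)
mean = statistics.mean(jumps)
sx = statistics.stdev(jumps)         # sample standard deviation (divides by n - 1)
cv = sx / mean                       # coefficient of variation
z_max = (maximum - mean) / sx        # z-score of the largest value

# Five classes: class width and class upper limits.
classes = 5
width = data_range / classes
upper_limits = [minimum + width * k for k in range(1, classes + 1)]

# Count the values above the previous upper limit and up to this one.
frequencies = []
lower = -math.inf
for upper in upper_limits:
    frequencies.append(sum(1 for x in jumps if lower < x <= upper))
    lower = upper
relative = [f / n for f in frequencies]

# 95% confidence interval for the mean.
# ASSUMPTION: t_critical is taken from a t-table (26 degrees of freedom).
t_critical = 2.0555
se = sx / math.sqrt(n)               # standard error of the mean
margin = t_critical * se             # margin of error E
low, high = mean - margin, mean + margin

print(n, minimum, maximum, data_range, midrange, median)
print(round(mean, 2), round(sx, 2), round(cv, 2), round(z_max, 2))
print(upper_limits, frequencies, [round(p, 2) for p in relative])
print(round(se, 2), round(margin, 2), round(low, 2), round(high, 2))
```

Rounded to two decimal places, the printed values can be compared line by line against the statistics tables and the histogram table in the report.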

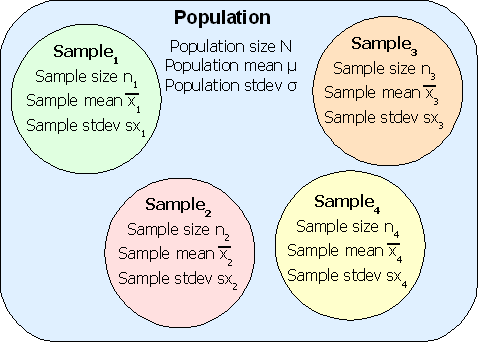

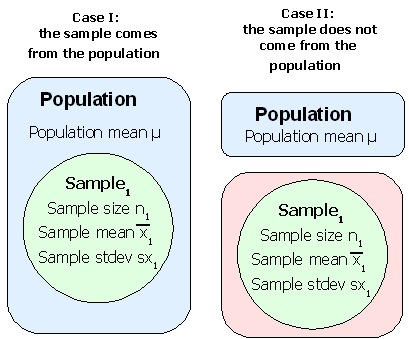

Statistics studies groups of people, objects, or data measurements and produces summarizing mathematical information on the groups. The groups are usually not all of the possible people, objects, or data measurements. The groups are called samples. The larger collection of people, objects or data measurements is called the population.

Statistics attempts to predict measurements for a population from measurements made on the smaller sample. For example, to determine the average weight of a student at the college, a study might select a random sample of fifty students to weigh. Then the measured average weight could be used to estimate the average weight for all students at the college. The fifty students would be the sample; all students at the college would be the population.

Population: The complete group of elements, objects, observations, or people.

• Parameters: Measurements of the population.

Sample: A part of the population. A sample is usually more than five measurements, observations, objects, or people, and smaller than the complete population.

• Statistics: Measurements of a sample.

Examples

We could use the ratio of females to males in a class to estimate the ratio of females to males on campus. The sample is the class. The population is all students on campus.

We could use the average body fat index for a randomly selected group of females between the ages of 18 and 22 on campus to determine the average body fat index for females in the FSM between the ages of 18 and 22. The sample is those females on campus that we've measured. The population is all females between the ages of 18 and 22 in the FSM.

Measurements are made of individual elements in a sample or population. The elements could be objects, animals, or people.

Sample size n

The sample size is the number of elements or measurements in a sample. The lower case letter n is used for sample size. If the population size is being reported, then an upper case N is used. The spreadsheet function for calculating the sample size is the COUNT function.

=COUNT(data)

Qualitative data refers to descriptive measurements, typically non-numerical.

Quantitative data refers to numerical measurements. Quantitative data can be discrete or continuous.

| Type | Subtype | Level of measurement | Definition | Examples |
|---|---|---|---|---|
| Qualitative | | Nominal | In name only | Sorting by categories such as red, orange, yellow, green, blue, indigo, violet |
| Quantitative | Discrete | Ordinal | In rank order, there exists an order but differences and ratios have no meaning | Grading systems: A, B, C, D, F. Sakau market rating system where the number of cups until one is pwopihda... |
| Quantitative | Continuous | Interval | Differences have meaning, but not ratios. There is either no zero or the zero has no mathematical meaning. | The numbering of the years: 2001, 2000, 1999. The year 2000 is 1000 years after 1000 A.D. (the difference has meaning), but it is NOT twice as many years (the ratio has no meaning). Someone born in 1998 is eight years younger than someone born in 1990: 1998 − 1990. A vase made in 2000 B.C., however, is not twice as old as a vase made in 1000 B.C. The complication is subtle and basically can stem from two sources: either there is no zero or the zero is not a true zero. The Fahrenheit and Celsius temperature systems both suffer from the latter defect. |
| Quantitative | Continuous | Ratio | Differences and ratios have meaning. There is a mathematically meaningful zero. | Physical quantities: distance, height, speed, velocity, time in seconds, altitude, acceleration, mass,... 100 kg is twice as heavy as 50 kg. Ten dollars is 1/10 of $100. |

Descriptive statistics: Numerical or graphical representations of samples or populations. Can include numerical measures such as mode, median, mean, standard deviation. Also includes images such as graphs, charts, visual linear regressions.

Inferential statistics: Using descriptive statistics of a sample to predict the parameters or distribution of values for a population.

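The number of measurements, elements, objects, or people in a sample is the sample size n. A simple random sample of n measurements from a population is one selected in a way that gives every member of the population the same chance of being selected and every possible sample of size n the same chance of being chosen.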

Ensuring that a sample is random is difficult. Suppose I want to study how many Pohnpeians own cars. Would people I meet/poll on main street Kolonia be a random sample? Why? Why not?

Studies often use random numbers to help randomly select objects or subjects for a statistical study. Obtaining random numbers can be more difficult than one might at first presume.

Computers can generate pseudo-random numbers. "Pseudo" means seemingly random but not truly random. Computer-generated random numbers behave very much like random numbers, but they are produced by a calculation and so are not truly random. Next we will learn to generate pseudo-random numbers using a computer. This section will also serve as an introduction to functions in spreadsheets.

Coins and dice can be used to generate random numbers.

This course presumes prior contact with a course such as CA 100 Computer Literacy where a basic introduction to spreadsheets is made.

The random function RAND generates numbers between 0 and 0.9999...

=RAND()

The random number function consists of a function name, RAND, followed by parentheses. For the random function nothing goes between the parentheses, not even a space.

To get other numbers the random function can be multiplied by a coefficient. To get whole numbers the integer function INT can be used to discard the decimal portion.

=INT(argument)

The integer function takes an "argument." The argument is a computer term for an input to the function. Inputs could include a number, a function, a cell address, or a range of cell addresses. The following function, when typed into a spreadsheet, mimics the flipping of a coin. A 1 will be a head, a 0 will be a tail.

=INT(RAND()*2)

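The spreadsheet can be made to display the word "head" or "tail" using the CHOOSE function. CHOOSE counts its choices starting from 1, so 1 is added to the 0 or 1 produced by the coin flip formula. A 0 then displays "tail" and a 1 displays "head":

=CHOOSE(INT(RAND()*2)+1,"tail","head")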

A single die can also be simulated using the following function

=INT(6*RAND()+1)

To randomly select among a set of student names, the following model can be built upon.

=CHOOSE(INT(RAND()*5+1),"Jan","Jen","Jin","Jon","Jun")

To generate another random choice, press the F9 key on the keyboard. F9 forces a spreadsheet to recalculate all formulas.
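The same coin, die, and name selection models can be tried outside a spreadsheet. The following is a minimal Python sketch, assuming only the standard library `random` module. The function names `coin_flip`, `die_roll`, and `pick_name` are illustrative and do not come from this text.

```python
import random

def coin_flip():
    # Mirrors =INT(RAND()*2): random.random() is at least 0 and below 1,
    # so the result is 0 (tail) or 1 (head).
    return int(random.random() * 2)

def die_roll():
    # Mirrors =INT(6*RAND()+1): a whole number from 1 to 6.
    return int(6 * random.random() + 1)

def pick_name(names):
    # Mirrors the CHOOSE model, except that Python lists count from 0,
    # so no 1 is added to the index.
    return names[int(random.random() * len(names))]

names = ["Jan", "Jen", "Jin", "Jon", "Jun"]
flips = [coin_flip() for _ in range(1000)]
rolls = [die_roll() for _ in range(1000)]

print("heads:", sum(flips), "tails:", len(flips) - sum(flips))
print("first ten rolls:", rolls[:10])
print("selected student:", pick_name(names))
```

Each run produces different output, in the same way that pressing F9 produces a new set of values in the spreadsheet.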

When practical, feasible, and worth both the cost and effort, measurements are done on the whole population. In many instances the population cannot be measured. Sampling refers to the ways in which random subgroups of a population can be selected. Some of the ways are listed below.

Census: Measurements done on the whole population.

Sample: Measurements of a representative random sample of the population.

Simulation: Today this often refers to constructing a model of a system using mathematical equations and then using computers to run the model, gathering statistics as the model runs.

Stratified sampling: Used to ensure a balanced sample. Suppose I want to do a study of the average body fat of young people in the FSM using students in the statistics course. The FSM population is roughly half Chuukese, but in the statistics course only 12% of the students list Chuuk as their home state. Pohnpei is 35% of the national population, but the statistics course is more than half Pohnpeian at 65%. If I choose as my sample students in the statistics course, then I am likely to wind up with Pohnpeians being overrepresented relative to the actual national proportion of Pohnpeians.

| State | 2010 Population | Fractional share of national population (relative frequency) | Statistics students by state of origin spring 2011 | Fractional share of statistics seats |

|---|---|---|---|---|

| Chuuk | 48651 | 0.47 | 10 | 0.12 |

| Kosrae | 6616 | 0.06 | 7 | 0.09 |

| Pohnpei | 35981 | 0.35 | 53 | 0.65 |

| Yap | 11376 | 0.11 | 12 | 0.15 |

| Sums: | 102624 | 1.00 | 82 | 1.00 |

The solution is to use stratified sampling. I ensure that my sample subgroups reflect the national proportions. Given that the sample size is small, I could choose to survey all ten Chuukese students, seven Pohnpeian students, two Yapese students, and one Kosraean student. There would still be statistical issues of the small subsample sizes from each state, but the ratios would be closer to those seen in the national population. Each state would be considered a single stratum.
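The allocation of ten, seven, two, and one can be reproduced with a short calculation. The following is a minimal Python sketch, not the procedure used to build the table above. It assumes a total sample of twenty students and gives any leftover seat to the state with the largest fractional share, which is one common rounding rule among several. The function name `allocate` and the placeholder roster are hypothetical.

```python
import math
import random

# 2010 state populations from the table above.
population = {"Chuuk": 48651, "Kosrae": 6616, "Pohnpei": 35981, "Yap": 11376}

def allocate(population, sample_size):
    """Split sample_size across the strata in proportion to population."""
    total = sum(population.values())
    exact = {s: sample_size * p / total for s, p in population.items()}
    counts = {s: math.floor(v) for s, v in exact.items()}
    # ASSUMPTION: leftover seats go to the largest fractional parts.
    leftover = sample_size - sum(counts.values())
    by_remainder = sorted(exact, key=lambda s: exact[s] - counts[s], reverse=True)
    for s in by_remainder[:leftover]:
        counts[s] += 1
    return counts

allocation = allocate(population, 20)
print(allocation)

# Hypothetical roster: placeholder labels standing in for the students
# in the statistics course, using the class counts from the table.
class_counts = {"Chuuk": 10, "Kosrae": 7, "Pohnpei": 53, "Yap": 12}
roster = {s: [f"{s} student {i}" for i in range(1, k + 1)]
          for s, k in class_counts.items()}

# Randomly select the allocated number of students within each stratum.
sample = {s: random.sample(roster[s], allocation[s]) for s in roster}
print({s: len(chosen) for s, chosen in sample.items()})
```

With only ten Chuukese students in the course, the allocation uses every one of them, which is why the text describes surveying all ten.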

Systematic sampling: Used where a population is in some sequential order. A start point must be randomly chosen. Useful in measuring a timed event. Never used if there is a cyclic or repetitive nature to a system: if the sample rate is roughly equal to the cycle rate, then the results are not going to be randomly distributed measurements. For example, suppose one is studying whether the sidewalks on campus are crowded. If one measures during the time between class periods when students are moving to their next class, then one would conclude the sidewalks are crowded. If one measured only when classes were in session, then one would conclude that there is no sidewalk crowding problem. This type of problem in measurement occurs whenever a system behaves in a regular, cyclical manner. The solution would be to ensure that the time interval between measurements is random.
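Sampling a sequence from a random start point, commonly called systematic sampling, can be sketched in a few lines. The following is a minimal Python sketch under stated assumptions: the ticket numbers are a hypothetical sequential population, and the function names `systematic_sample` and `random_interval_times` are illustrative. The second function shows the random time interval suggested above for cyclic systems.

```python
import random

def systematic_sample(items, interval):
    # Choose a random start within the first interval,
    # then take every interval-th item after it.
    start = random.randrange(interval)
    return items[start::interval]

def random_interval_times(count, max_gap):
    # Measurement times separated by random gaps of 1 to max_gap minutes,
    # so that the measurements do not fall in step with a regular cycle.
    times, t = [], 0
    for _ in range(count):
        t += random.randint(1, max_gap)
        times.append(t)
    return times

# Hypothetical sequential population: ticket numbers 1 to 100.
tickets = list(range(1, 101))
chosen = systematic_sample(tickets, 10)
times = random_interval_times(8, 30)

print("tickets sampled:", chosen)
print("measurement times in minutes:", times)
```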

Cluster sampling: The population is divided into naturally occurring subunits and then subunits are randomly selected for measurement. In this method it is important that subunits (subgroups) are fairly interchangeable. Suppose we want to poll the people of Kitti on whether they would pay for water if water was guaranteed to be clean and available 24 hours a day. We could cluster by breaking up the population by kosapw and then randomly choose a few kosapws and poll everyone in these kosapws. The results could probably be generalized to all Kitti.
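The two steps described above, randomly choosing whole subunits and then measuring everyone in them, can be sketched as follows. This is a minimal Python sketch with hypothetical data: the kosapw labels and residents are placeholders, not real places or people, and the function name `cluster_sample` is illustrative.

```python
import random

# Hypothetical clusters: eight placeholder kosapw labels,
# each holding five placeholder residents.
clusters = {f"kosapw {c}": [f"resident {c}{i}" for i in range(1, 6)]
            for c in "ABCDEFGH"}

def cluster_sample(clusters, how_many):
    # Randomly choose whole clusters, then keep everyone in each chosen cluster.
    chosen = random.sample(sorted(clusters), how_many)
    return {name: clusters[name] for name in chosen}

polled = cluster_sample(clusters, 3)
for name, residents in polled.items():
    print(name, residents)
```

The random choice is made among clusters, not among individuals, which is why the clusters need to be fairly interchangeable.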

Convenience sampling: Results or data that are easily obtained are used. Highly unreliable as a method of getting a random sample. Examples would include a survey of one's friends and family as a sample population, or the surveys that some newspapers and news programs produce where a reporter surveys people shopping in a store.

In science, statistics are gathered by running an experiment and then repeating the experiment. The sample is the experiments that are conducted. The population is the theoretically abstract concept of all possible runs of the experiment for all time.

The method behind experimentation is called the scientific method. In the scientific method, one forms a hypothesis, makes a prediction, formulates an experiment, and runs the experiment.

Some experiments involve new treatments. These require the use of a control group and an experimental group, with the groups being chosen randomly and the experiment run double blind. Double blind means that neither the experimenter nor the subjects know which treatment is the experimental treatment and which is the control treatment. A third party keeps track of which is which, usually using number codes. Then the results are tested for a statistically significant difference between the two groups.

Placebo effect: just believing you will improve can cause improvement in a medical condition.

Replication is also important in the world of science. If an experiment cannot be repeated and produce the same results, then the theory under test is rejected.

Some of the steps in an experiment are listed below:

Observational studies gather statistics by observing a system in operation, or by observing people, animals, or plants. Data is recorded by the observer. Someone sitting and counting the number of birds that land or take-off from a bird nesting islet on the reef is performing an observational study.

Surveys are usually done by giving a questionnaire to a random sample. Voluntary responses tend to be negative. As a result, there may be a bias towards negative findings. Hidden bias/unfair questions: Are you the only crazy person in your family?

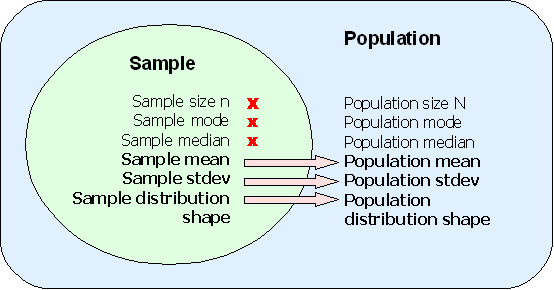

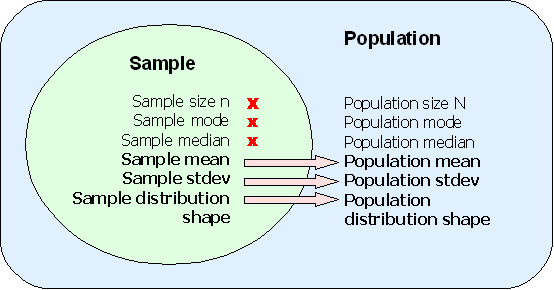

The process of extending from sample results to population. If a sample is a good random sample, representative of the population, then some sample statistics can be used to estimate population parameters. Sample means and proportions can often be used as point estimates of a population parameter.

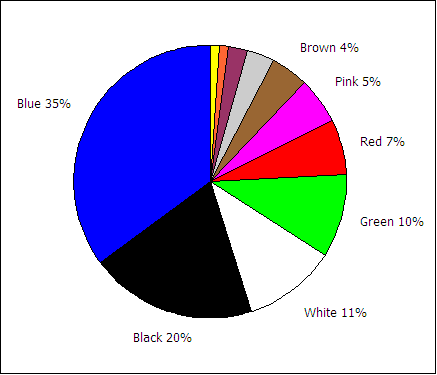

Although the mode and median, covered in chapter three, do not always predict the population mode and median well, there are situations in which a mode may be used. If a good, random, and representative sample of students finds that the color blue is the favorite color for the sample, then blue is a best first estimate of the favorite color of the population of students or any future student sample.

| Favorite color | Frequency f | Relative Frequency or p(color) |

|---|---|---|

| Blue | 32 | 35% |

| Black | 18 | 20% |

| White | 10 | 11% |

| Green | 9 | 10% |

| Red | 6 | 7% |

| Pink | 5 | 5% |

| Brown | 4 | 4% |

| Gray | 3 | 3% |

| Maroon | 2 | 2% |

| Orange | 1 | 1% |

| Yellow | 1 | 1% |

| Sums: | 91 | 100% |

If the above sample of 91 students is a good random sample of the population of all students, then we could make a point estimate that roughly 35% of the students in the population will prefer blue.
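The point estimate comes from dividing each frequency by the sample size. The following is a minimal Python sketch of that arithmetic using the frequencies from the table above. The variable names are illustrative.

```python
# Favorite color frequencies copied from the table above.
favorite = {"Blue": 32, "Black": 18, "White": 10, "Green": 9, "Red": 6,
            "Pink": 5, "Brown": 4, "Gray": 3, "Maroon": 2, "Orange": 1,
            "Yellow": 1}

n = sum(favorite.values())                         # sample size
relative = {color: f / n for color, f in favorite.items()}
mode_color = max(favorite, key=favorite.get)       # most frequent color

print("n =", n)
print("mode:", mode_color)
print("point estimate for", mode_color, "=", f"{relative[mode_color]:.0%}")
```

The estimate is only as good as the sample: it holds as a point estimate for the population if the 91 students are a good random sample.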

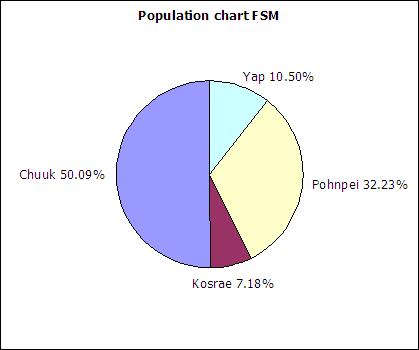

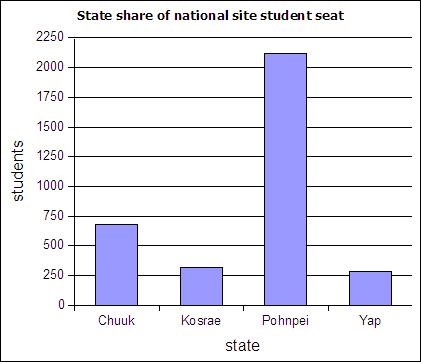

The table below includes FSM census 2000 data and student seat numbers for the national site of COM-FSM circa 2004.

| State | Population (2000) | Fractional share of national population (relative frequency) | Number of student seats held by state at the national campus | Fractional share of the national campus student seats |

|---|---|---|---|---|

| Chuuk | 53595 | 0.5 | 679 | 0.2 |

| Kosrae | 7686 | 0.07 | 316 | 0.09 |

| Pohnpei | 34486 | 0.32 | 2122 | 0.62 |

| Yap | 11241 | 0.11 | 287 | 0.08 |

| Sums: | 107008 | 1 | 3404 | 1 |

In a circle chart the whole circle is 100%. Circle charts are used when data adds to a whole, e.g. state populations add to yield the national population.

A pie chart of the state populations:

The following table includes data from the 2010 FSM census as an update to the above data.

| State | Population (2010) | Relative frequency |

|---|---|---|

| Chuuk | 48651 | |

| Kosrae | 6616 | |

| Pohnpei | 35981 | |

| Yap | 11376 | |

| Sum: | 102624 | |

Column charts are also called bar graphs. A column chart of the student seats held by each state at the national site:

If a column chart is sorted so that the columns are in descending order, then it is called a Pareto chart. Descending order means the largest value is on the left and the values decrease as one moves to the right. Pareto charts are useful ways to convey rank order as well as numerical data.
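Putting columns in Pareto order is a sort on the values, largest first. The following is a minimal Python sketch using the student seat counts from the census 2000 table earlier in this section. The text bars are only a rough stand-in for a column chart, with one `#` per hundred seats.

```python
# Student seats held by each state at the national campus (from the table above).
seats = {"Chuuk": 679, "Kosrae": 316, "Pohnpei": 2122, "Yap": 287}

# Descending order: largest value first, as in a Pareto chart.
pareto_order = sorted(seats.items(), key=lambda item: item[1], reverse=True)

for state, count in pareto_order:
    print(f"{state:8} {count:5} {'#' * (count // 100)}")
```

In a spreadsheet the same result comes from sorting the data rows in descending order on the seats column before inserting the chart.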

A line graph is a chart which plots data as a line. The horizontal axis is usually set up with equal intervals. Line graphs are not used in this course and should not be confused with xy scattergraphs.

When you have two sets of continuous data (value versus value, no categories), use an xy graph. These will be covered in more detail in the chapter on linear regressions.

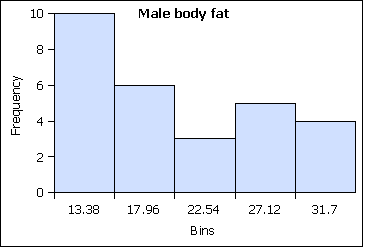

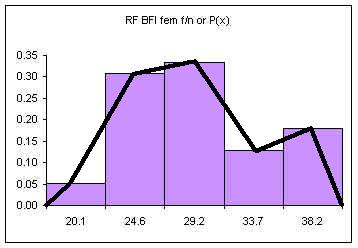

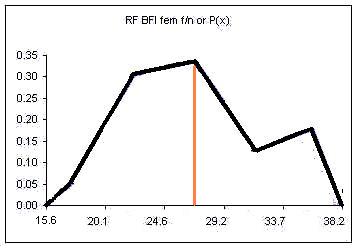

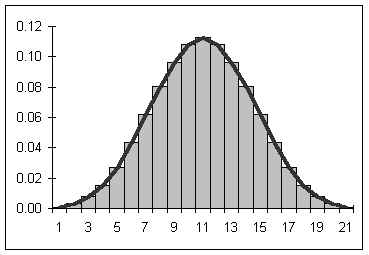

A distribution counts the number of elements of data in either a category or within a range of values. Plotting the count of the elements in each category or range as a column chart generates a chart called a histogram. The histogram shows the distribution of the data. The height of each column shows the frequency of an event. This distribution often provides insight into the data that the data itself does not reveal. In the histogram below, the distribution for male body fat among statistics students has two peaks. The two peaks suggest that there are two subgroups among the men in the statistics course, one subgroup that is at a healthy level of body fat and a second subgroup at a higher level of body fat.

The ranges into which values are gathered are called bins, classes, or intervals. This text tends to use classes or bins to describe the ranges into which the data values are grouped.

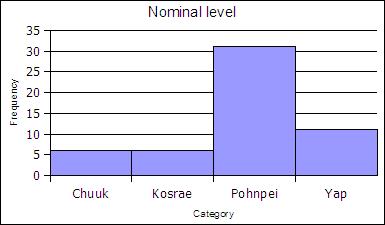

At the nominal level of measurement one can determine the frequency of elements in a category, such as students by state in a statistics course.

| State | Frequency | Rel Freq |

|---|---|---|

| Chuuk | 6 | 0.11 |

| Kosrae | 6 | 0.11 |

| Pohnpei | 31 | 0.57 |

| Yap | 11 | 0.20 |

| Sums: | 54 | 1.00 |

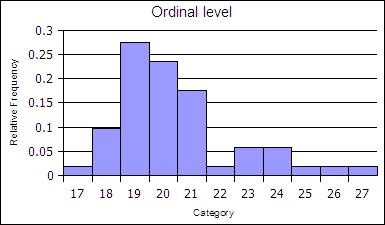

At the ordinal level, a frequency distribution can be done using the rank order, counting the number of elements in each rank order to obtain a frequency. When the frequency data is calculated in this way, the distribution is not grouped into a smaller number of classes.

| Age | Frequency | Rel Freq |

|---|---|---|

| 17 | 1 | 0.02 |

| 18 | 5 | 0.1 |

| 19 | 14 | 0.27 |

| 20 | 12 | 0.24 |

| 21 | 9 | 0.18 |

| 22 | 1 | 0.02 |

| 23 | 3 | 0.06 |

| 24 | 3 | 0.06 |

| 25 | 1 | 0.02 |

| 26 | 1 | 0.02 |

| 27 | 1 | 0.02 |

| sums | 51 | 1 |

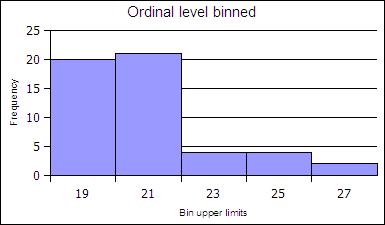

The ranks can be collected together, or classed, to reduce the number of rank order categories. In the example below the age data is gathered into two-year cohorts.

| Age | Frequency | Rel Freq |

|---|---|---|

| 19 | 20 | 0.39 |

| 21 | 21 | 0.41 |

| 23 | 4 | 0.08 |

| 25 | 4 | 0.08 |

| 27 | 2 | 0.04 |

| Sums: | 51 | 1 |

At the ratio level data is always gathered into ranges. At the ratio level, classed histograms are used. Ratio level data is not necessarily in a finite number of ranks as was ordinal data.

The ranges into which data is gathered are defined by a class lower limit and a class upper limit. The width is the class upper limit minus the class lower limit. The frequency function in spreadsheets uses class upper limits. In this text histograms are also generated using the class upper limits.

To calculate the class lower and upper limits the minimum and maximum value in a data set must be determined. Spreadsheets include functions to calculate the minimum value MIN and maximum value MAX in a data set.

=MIN(data)

=MAX(data)

In LibreOffice the MIN and MAX functions can take a list of comma separated numbers or a range of cells in a spreadsheet. In statistics a range of cells is the most common input for these functions. Because a range of cells is the usual input, this text uses the word "data" to refer to the range of cells that holds your data! Ranges of cells use two cell addresses separated by a full colon. An example is shown below where the data is arranged in a vertical column from A2 to A42. Sort the original data from smallest to largest before you begin!

=MIN(A2:A42)

How to make a frequency table at the ratio level

range = maximum value - minimum value

| Class Upper Limits (CUL) | Frequency |
|---|---|
| = min + class width | |
| + class width | |
| + class width | |
| + class width | |
| + class width = max | |

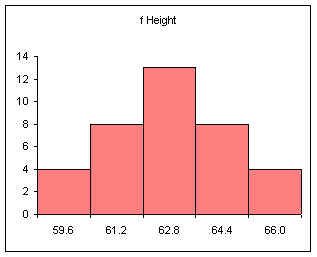

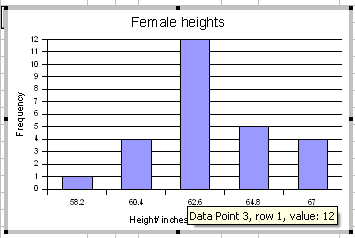

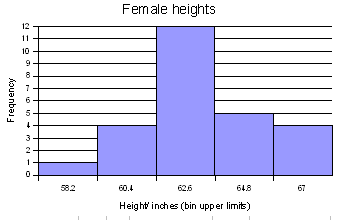

For the female height data:

58, 58, 59.5, 59.5, 60, 60, 60, 60, 60, 61, 61, 61.2, 61.5, 62, 62, 62, 62, 62, 62, 62, 62, 62, 62, 62, 62, 63, 63, 63, 63.5, 64, 64, 64, 64, 65, 65, 66, 66

Five classes would produce the following results:

Min = 58

Max = 66

Range = 66 - 58 = 8

Width = 8/5 = 1.6

| Calculation | Height (CUL) | Frequency |

|---|---|---|

| 58 + 1.6 | 59.6 | 4 |

| 59.6 + 1.6 | 61.2 | 8 |

| 61.2 + 1.6 | 62.8 | 13 |

| 62.8 + 1.6 | 64.4 | 8 |

| 64.4 + 1.6 | 66 | 4 |

| Sum: | | 37 |

Note that 61.2 is INCLUDED in the class that ends at 61.2. The class includes values at the class upper limit. In other words, a class includes all values up to and including the class upper limit.

Note too that the frequencies add to the sample size.
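For readers who want to check a frequency table outside the spreadsheet, the class upper limit procedure above can be sketched in Python. This is only an illustration under stated assumptions: the function names `class_upper_limits` and `count_by_class` are made up for this sketch, and rounding the limits to ten decimal places is a choice made here to suppress floating point noise.

```python
# Female height data from the example above (37 values).
heights = [58, 58, 59.5, 59.5, 60, 60, 60, 60, 60, 61, 61, 61.2, 61.5,
           62, 62, 62, 62, 62, 62, 62, 62, 62, 62, 62, 62,
           63, 63, 63, 63.5, 64, 64, 64, 64, 65, 65, 66, 66]


def class_upper_limits(data, classes):
    """Return equal-width class upper limits; the last one is the maximum."""
    low, high = min(data), max(data)
    width = (high - low) / classes
    # Rounding is an assumption of this sketch, used to avoid float noise.
    limits = [round(low + i * width, 10) for i in range(1, classes)]
    limits.append(high)  # use the maximum itself for the final class
    return limits


def count_by_class(data, limits):
    """Count values in each class; a class includes its upper limit."""
    counts = []
    lower = float("-inf")
    for upper in limits:
        counts.append(sum(1 for x in data if lower < x <= upper))
        lower = upper
    return counts


limits = class_upper_limits(heights, 5)
frequencies = count_by_class(heights, limits)
print(limits)       # [59.6, 61.2, 62.8, 64.4, 66]
print(frequencies)  # [4, 8, 13, 8, 4]
```

The test `lower < x <= upper` is what places 61.2 in the class that ends at 61.2.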

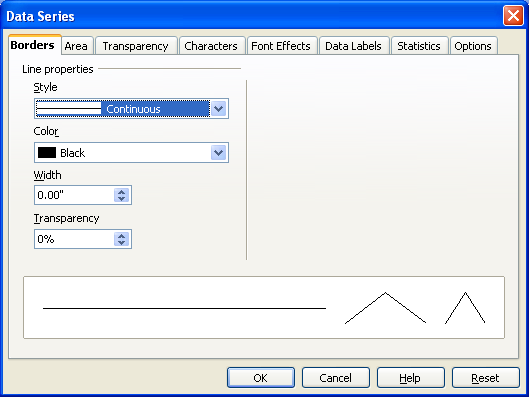

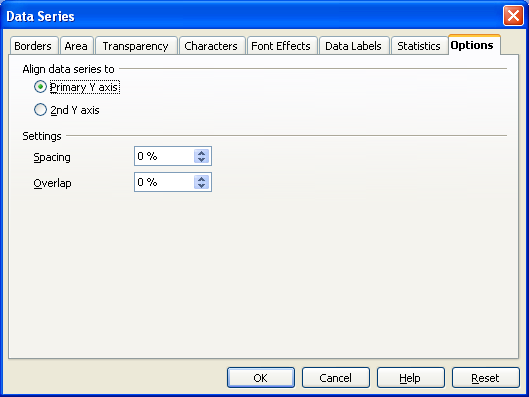

After making the column chart, double click on the columns to open the data series dialog box. Find the Options tab and set the spacing (or gap width) to zero.

Note that the spacing or gap width on the columns has been set to zero.

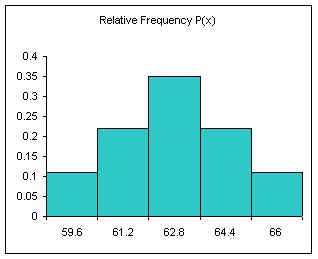

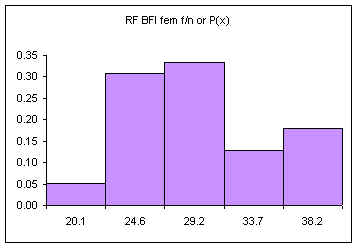

Relative frequency is one way to determine a probability.

Divide each frequency by the sum (the sample size) to get the relative frequency.

| Height CUL | Frequency | Relative Frequency f/n or P(x) |

|---|---|---|

| 59.6 | 4 | 0.11 |

| 61.2 | 8 | 0.22 |

| 62.8 | 13 | 0.35 |

| 64.4 | 8 | 0.22 |

| 66 | 4 | 0.11 |

| Sum: | 37 | 1.00 |

The relative frequencies always add to one. Rounding causes the values shown above to add to 1.01; if all the decimal places were used, the relative frequencies would add to one.

The area under the relative frequency columns is equal to one.
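The effect of rounding on the relative frequencies can be checked with a short Python sketch. The variable names are illustrative only; the frequencies are the height frequencies from the table above.

```python
frequencies = [4, 8, 13, 8, 4]  # height frequencies from the table above
n = sum(frequencies)            # sample size, 37

relative = [f / n for f in frequencies]
rounded = [round(r, 2) for r in relative]

print(rounded)        # [0.11, 0.22, 0.35, 0.22, 0.11]
print(sum(relative))  # one, up to floating point error
print(sum(rounded))   # about 1.01, because each value was rounded first
```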

Another example, using mostly whole-number data:

0, 1, 2, 2, 3, 3, 3, 4, 4, 4, 4.5, 5, 5, 5, 6, 6, 7, 8, 9, 10

Five classes

min = 0

max = 10

range = 10

width = 10/5 = 2

| Class Num | Calculation | CUL | Frequency | Relative Frequency f/n or P(x) |

|---|---|---|---|---|

| 1 | min + width | 2 | 4 | 0.20 |

| 2 | + width | 4 | 6 | 0.30 |

| 3 | + width | 6 | 6 | 0.30 |

| 4 | + width | 8 | 2 | 0.10 |

| 5 | + width | 10 | 2 | 0.10 |

| Sum: | | | 20 | 1.00 |

The above method produces equal width classes and conforms to the inclusion of the class upper limit by spreadsheet packages.

The final class upper limit must be equal to the maximum value in the data set. The frequencies must sum to the sample size n. The relative frequencies must add to 1.00.

| CUL | Frequency | Relative Frequency f/n |

|---|---|---|

| min + width | ||

| + width | ||

| + width | ||

| + width | ||

| + width = MAX | ||

| Sum: | sample size n | 1.00 |

For more advanced spreadsheet users, frequency data can be obtained using the frequency function FREQUENCY. This function is also very useful when working with large data sets. The frequency function is:

=FREQUENCY(DATA,CLASSES)

DATA refers to the range of cells containing the data, CLASSES refers to the range of cells containing the class upper limits.
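The counting rule can be imitated in Python as a rough sketch. This is not the spreadsheet's own code: the function name `frequency` and the extra final count for values above the last limit are choices made for this illustration.

```python
from bisect import bisect_left


def frequency(data, class_upper_limits):
    """Count data values per class, each class including its upper limit.

    The extra final entry counts values above the last limit, which should
    be zero when the last limit equals the maximum.
    """
    limits = sorted(class_upper_limits)
    counts = [0] * (len(limits) + 1)
    for x in data:
        # bisect_left finds the position of the first limit that is >= x.
        counts[bisect_left(limits, x)] += 1
    return counts


data = [0, 1, 2, 2, 3, 3, 3, 4, 4, 4, 4.5, 5, 5, 5, 6, 6, 7, 8, 9, 10]
counts = frequency(data, [2, 4, 6, 8, 10])
print(counts)  # [4, 6, 6, 2, 2, 0]
```

A nonzero final entry would be a warning that the last class upper limit is smaller than the maximum.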

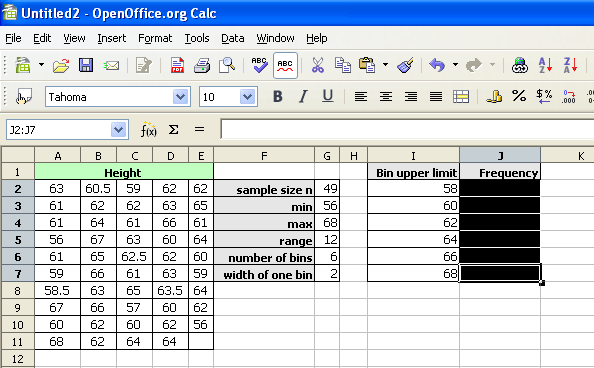

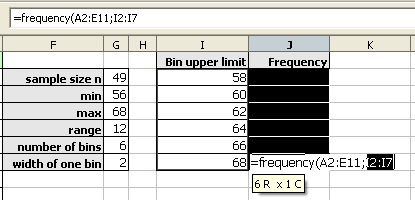

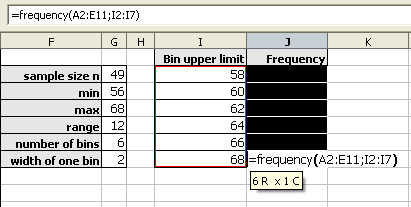

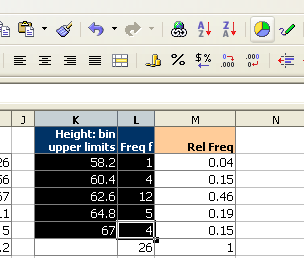

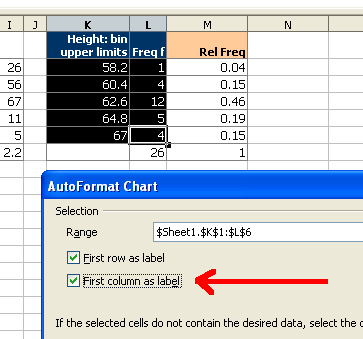

The data set seen below are the height measurements for 49 female students in statistics courses during two consecutive terms.

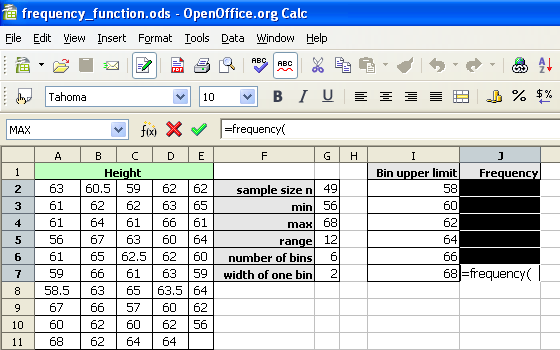

The frequency function built into spreadsheets works very differently from all other functions. The frequency function is called an "array" function because the function places values into an array of cells. For the function to do this, you must first select the cells into which the function will place the frequency values.

With the cells still highlighted, start typing the frequency function.

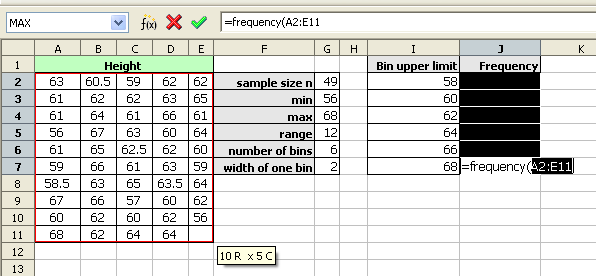

After typing the opening parenthesis, drag and select the data to be classed. If the data is more than can be selected by dragging, type the data range in by hand.

The frequency function usually uses a comma, not a semi-colon as seen in the image below.

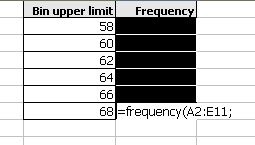

Drag and select the class upper limits.

Type the closing parenthesis.

Then press and hold down BOTH the CONTROL (Ctrl) key and the SHIFT key. With both the control and shift keys held down, press the Enter (or Return) key.

As noted above, the frequencies should add to the sample size. When working with spreadsheets, internal rounding errors can cause the maximum value in a data set to not get included in the final class. In the last class, use the value obtained by the MAX function and not the previous class + a width formula to generate that class upper limit.

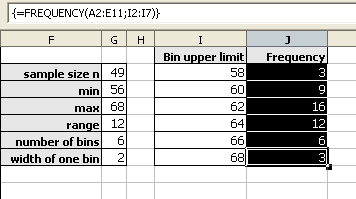

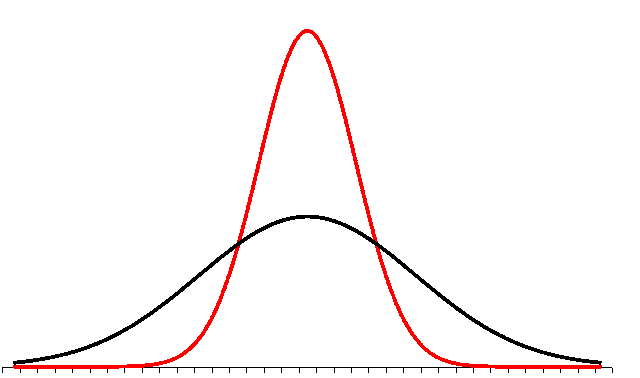

The shapes of distributions have names by which they are known.

One of the aspects of a sample that is often similar to the population is the shape of the distribution. If a good random sample of sufficient size has a symmetric distribution, then the population is likely to have a symmetric distribution. The process of projecting results from a sample to a population is called generalizing. Thus we can say that the shape of a sample distribution generalizes to a population.

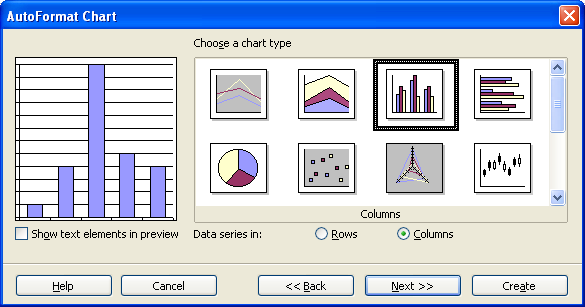

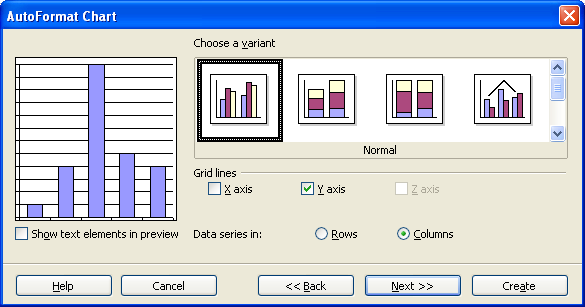

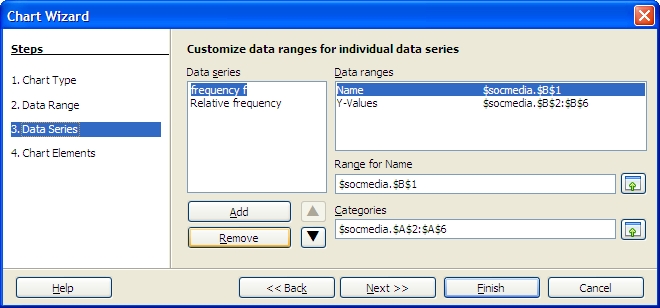

Select both the column with the class and the column with the frequencies.

Click on the chart wizard button and then drag the mouse to place and size the histogram.

At the first dialog box be sure to click on the "First column as label" check box as indicated by the arrow in the diagram below.

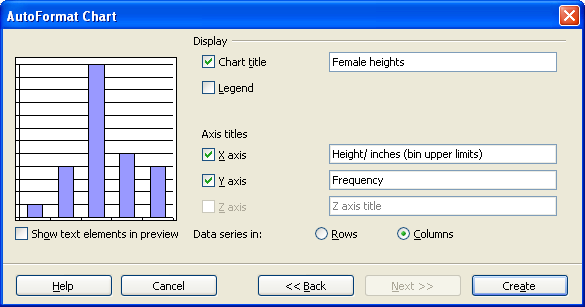

For the next two screens simply click on "Next"

On the next screen fill in the appropriate titles. The legend can be "unchecked" as seen below.

When done, click on Create.

Double click any column to open up the data series dialog box.

Click on the options tab and set the spacing to zero.

Click on OK.

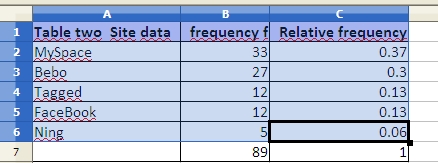

The chart wizard for OpenOffice.org 3.1 running on Ubuntu 9.10 will not produce a two-dimensional column chart from a "split selection." This complicates producing a relative frequency chart. To produce a relative frequency chart, select all three columns and then delete the frequency column.

In step three, remove the frequency series to chart only the relative frequency.

The mode is the value that occurs most frequently in the data. Spreadsheet programs such as Microsoft Excel or OpenOffice.org Calc can determine the mode with the function MODE.

=MODE(data)

In the Fall of 2000 the statistics class gathered data on the number of siblings for each member of the class. One student was an only child and had no siblings. One student had 13 brothers and sisters. The complete data set is as follows:

1,2,2,2,2,2,3,3,4,4,4,5,5,5,7,8,9,10,12,12,13

The mode is 2 because 2 occurs more often than any other value. Where there is a tie there is no mode.

For the ages of students in that class

18,19,19,20,20,21,21,21,21,22,22,22,22,23,23,24,24,25,25,26

...there is no mode: there is a tie between 21 and 22, hence there is no single most frequent value. Spreadsheets will, however, usually report a mode of 21 in this case. Spreadsheets often select the first mode in a multi-modal tie.

If all values appear only once, then there is no mode. Spreadsheets will display #N/A or #VALUE to indicate an error has occurred - there is no mode. No mode is NOT the same as a mode of zero. A mode of zero means that zero is the most frequent data value. Do not put the number 0 (zero) for "no mode." An example of a mode of zero might be the number of children for students in statistics class.
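The "no mode on a tie" convention used in this text can be written out as a small Python sketch. The function name `single_mode` is hypothetical, and returning `None` for "no mode" is a choice made here; it is deliberately different from returning zero.

```python
from collections import Counter


def single_mode(data):
    """Return the one most frequent value, or None when there is no mode."""
    counts = Counter(data)
    highest = max(counts.values())
    winners = [value for value, count in counts.items() if count == highest]
    # A tie for most frequent (including "every value once") means no mode.
    return winners[0] if len(winners) == 1 else None


siblings = [1, 2, 2, 2, 2, 2, 3, 3, 4, 4, 4, 5, 5, 5, 7, 8, 9, 10, 12, 12, 13]
ages = [18, 19, 19, 20, 20, 21, 21, 21, 21, 22, 22, 22, 22,
        23, 23, 24, 24, 25, 25, 26]

print(single_mode(siblings))  # 2
print(single_mode(ages))      # None, because 21 and 22 tie
```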

The median is the central (or middle) value in a data set. If a number sits at the middle, then it is the median. If the middle is between two numbers, then the median is half way between the two middle numbers.

For the sibling data...

1,2,2,2,2,2,3,3,4,4,|4|,5,5,5,7,8,9,10,12,12,13

...the median is 4.

Note the data must be in order (sorted) before you can find the median. For the data 2, 4, 6, 8 the median is 5: (4+6)/2.

The median function in spreadsheets is MEDIAN.

=MEDIAN(data)
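The two cases, a single middle value or a middle that falls between two values, can be seen in a short Python sketch. The function name `median_value` is made up for this illustration.

```python
def median_value(data):
    """Return the middle value of the sorted data."""
    ordered = sorted(data)  # the data must be in order first
    n = len(ordered)
    middle = n // 2
    if n % 2 == 1:
        return ordered[middle]  # odd sample size: one middle value
    # Even sample size: half way between the two middle values.
    return (ordered[middle - 1] + ordered[middle]) / 2


siblings = [1, 2, 2, 2, 2, 2, 3, 3, 4, 4, 4, 5, 5, 5, 7, 8, 9, 10, 12, 12, 13]
print(median_value(siblings))      # 4
print(median_value([2, 4, 6, 8]))  # 5.0
```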

The mean, also called the arithmetic mean and also called the average, is calculated mathematically by adding the values and then dividing by the number of values (the sample size n).

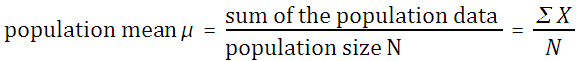

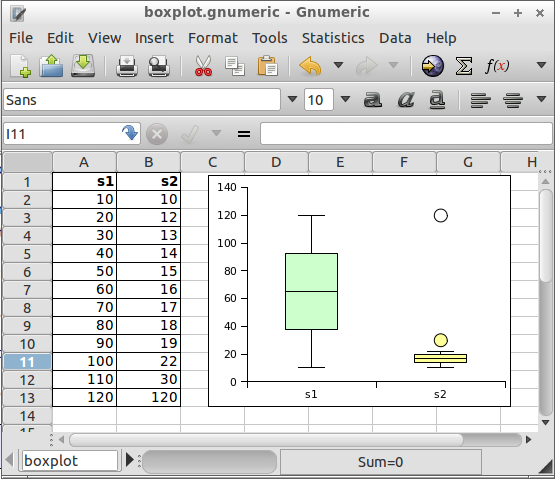

If the mean is the mean of a population, then it is called the population mean μ. The letter μ is a Greek lower case "m" and is pronounced "mu."

If the mean is the mean of a sample, then it is the sample mean x̄. The symbol x̄ is pronounced "x bar."

The sum of the data ∑x can be determined using the function =SUM(data). The sample size n can be determined using =COUNT(data). Thus =SUM(data)/COUNT(data) will calculate the mean. There is also a single function that calculates the mean directly, the function AVERAGE:

=AVERAGE(data)

A resistant measure is one that is not influenced by extremely high or extremely low data values. The median tends to be more resistant than the mean.

If the mean is measured using the whole population then this would be the population mean. If the mean was calculated from a sample then the mean is the sample mean. Mathematically there is no difference in the way the population and sample mean are calculated.
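A short Python sketch shows the mean calculation and why the median is described as more resistant. The altered data set, in which the largest sibling count is replaced by 130, is invented purely for this illustration.

```python
from statistics import median

siblings = [1, 2, 2, 2, 2, 2, 3, 3, 4, 4, 4, 5, 5, 5, 7, 8, 9, 10, 12, 12, 13]


def mean_value(data):
    """Sum of the values divided by the sample size n."""
    return sum(data) / len(data)


# Hypothetical data entry error: the last value typed as 130 instead of 13.
altered = siblings[:-1] + [130]

print(round(mean_value(siblings), 2), median(siblings))  # 5.48 4
print(round(mean_value(altered), 2), median(altered))    # 11.05 4
```

One extreme value roughly doubles the mean while the median does not move.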

The midrange is the midway point between the minimum and the maximum in a set of data.

To calculate the minimum and maximum values, spreadsheets use the minimum value function MIN and maximum value function MAX.

=MIN(data)

=MAX(data)

The MIN and MAX functions can take a list of comma separated numbers or a range of cells in a spreadsheet. If the data is in cells A2 to A42, then the minimum and maximum can be found from:

=MIN(A2:A42)

=MAX(A2:A42)

The midrange can then be calculated from:

midrange = (maximum + minimum)/2

In a spreadsheet use the following formula:

=(MAX(data)+MIN(data))/2

The range is the maximum data value minus the minimum data value.

=MAX(data)-MIN(data)

The range is a useful basic statistic that provides information on the distance between the most extreme values in the data set.
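The range and the midrange use the same two values, the minimum and the maximum. A minimal Python sketch, with function names chosen only for this illustration and the sibling data reused from earlier in the chapter:

```python
def data_range(data):
    """Distance between the most extreme values."""
    return max(data) - min(data)


def midrange(data):
    """Midway point between the minimum and the maximum."""
    return (max(data) + min(data)) / 2


siblings = [1, 2, 2, 2, 2, 2, 3, 3, 4, 4, 4, 5, 5, 5, 7, 8, 9, 10, 12, 12, 13]
print(data_range(siblings))  # 12
print(midrange(siblings))    # 7.0
```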

The range does not show whether the data is evenly spread out across the range or crowded together in just one part of the range. The way in which the data is either spread out or crowded together in a range is referred to as the distribution of the data. One of the ways to understand the distribution of the data is to calculate the position of the quartiles and make a chart based on the results.

The median is the value that is the middle value in a sorted list of values. At the median 50% of the data values are below and 50% are above. This is also called the 50th percentile for being 50% of the way "through" the data.

If one starts at the minimum, 25% of the way "through" the data, the point at which 25% of the values are smaller, is the 25th percentile. The value that is 25% of the way "through" the data is also called the first quartile.

Moving on "through" the data to the median, the median is also called the second quartile.

Moving past the median, 75% of the way "through" the data is the 75th percentile also known as the third quartile.

Note that the 0th percentile is the minimum and the 100th percentile is the maximum.

Spreadsheets can calculate the first, second, and third quartile for data using a function, the quartile function.

=QUARTILE(data,type)

Data is a range with data. Type represents the type of quartile: 0 = minimum, 1 = 25% or first quartile, 2 = 50% (median), 3 = 75% or third quartile, and 4 = maximum. Thus if data is in the cells A1:A20, the first quartile could be calculated using:

=QUARTILE(A1:A20,1)

The InterQuartile Range (IQR) is the range between the first and third quartile:

=QUARTILE(Data,3)-QUARTILE(Data,1)

There are some subtleties to calculating the IQR for sets with even versus odd sample sizes, but this text leaves those details to the spreadsheet software functions.
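As a rough cross-check outside the spreadsheet, Python's `statistics.quantiles` can produce the three quartiles. The sketch assumes the spreadsheet QUARTILE function interpolates linearly with the minimum as the 0th percentile and the maximum as the 100th, which is what `method="inclusive"` does; other software may use a different quartile method and give slightly different values.

```python
from statistics import quantiles

siblings = [1, 2, 2, 2, 2, 2, 3, 3, 4, 4, 4, 5, 5, 5, 7, 8, 9, 10, 12, 12, 13]

# n=4 cuts the data into four parts, returning the three quartiles.
q1, q2, q3 = quantiles(siblings, n=4, method="inclusive")
iqr = q3 - q1

print(q1, q2, q3)  # 2.0 4.0 8.0
print(iqr)         # 6.0
```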

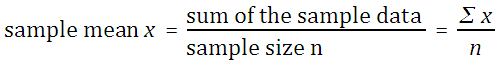

The above is very abstract and hard to visualize. A box and whisker plot takes the above quartile information and plots a chart based on the quartiles.

A box and whisker plot is built around a box that runs from the value at the 25th percentile (first quartile) to the value at the 75th percentile (third quartile). The length of the box spans the distance from the value at the first quartile to the value at the third quartile; this distance is called the Inter-Quartile Range (IQR). A line is drawn inside the box at the location of the 50th percentile. The 50th percentile is also known as the second quartile and is the median for the data. Half the scores are above the median, half are below the median. Note that the 50th percentile is the median, not the mean.

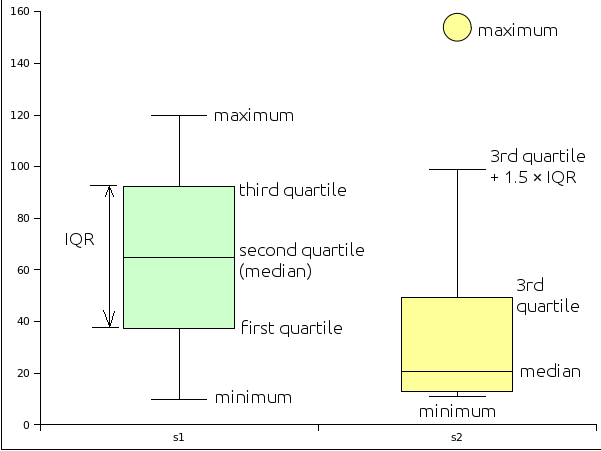

| s1 | s2 |

|---|---|

| 10 | 11 |

| 20 | 11 |

| 30 | 12 |

| 40 | 13 |

| 50 | 15 |

| 60 | 18 |

| 70 | 23 |

| 80 | 31 |

| 90 | 44 |

| 100 | 65 |

| 110 | 99 |

| 120 | 154 |

The basic box plot described above has lines that extend from the first quartile down to the minimum value and from the third quartile to the maximum value. These lines are called "whiskers" and end with a cross-line called a "fence". If, however, the minimum is more than 1.5 × IQR below the first quartile, then the lower fence is put at 1.5 × IQR below the first quartile and the values below the fence are marked with a round circle. These values are referred to as potential outliers - the data is unusually far from the median in relation to the other data in the set.

Likewise, if the maximum is more than 1.5 × IQR beyond the third quartile, then the upper fence is located at 1.5 × IQR above the 3rd quartile. The maximum is then plotted as a potential outlier along with any other data values beyond 1.5 × IQR above the 3rd quartile.

There are actually two types of outliers. Potential outliers lie between 1.5 × IQR and 3.0 × IQR beyond the first or third quartile. Extreme outliers lie more than 3.0 × IQR beyond the quartile. In the program Gnome Gnumeric potential outliers are marked with a circle colored in with the color of the box. Extreme outliers are marked with an open circle - a circle with no color inside.

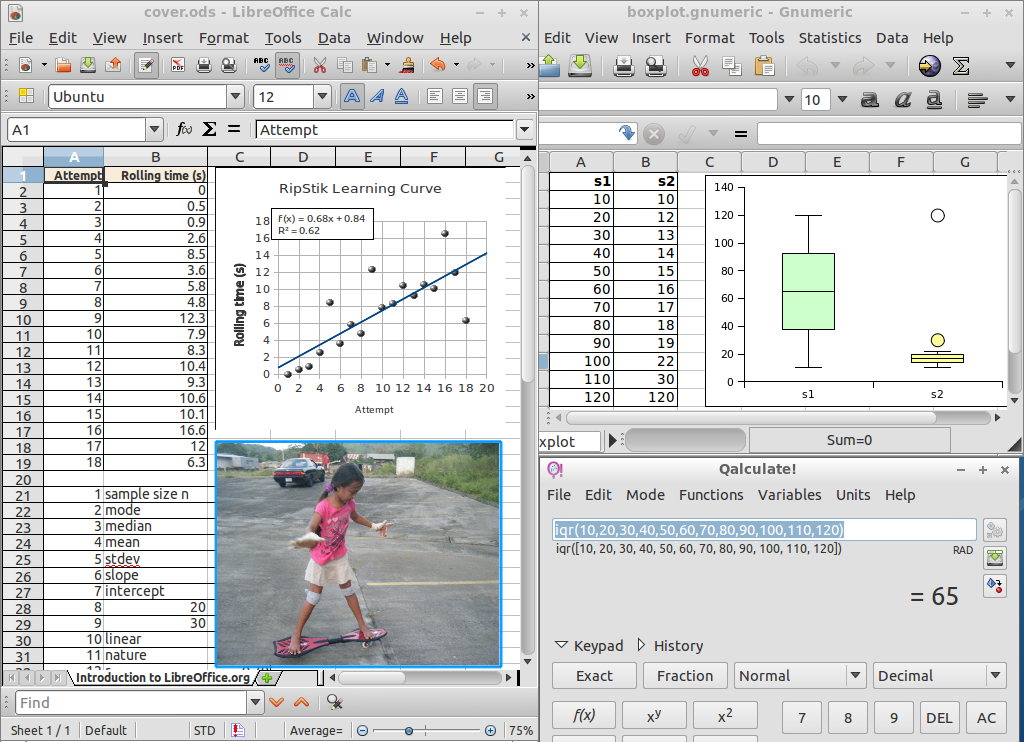
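The outlier rules can be sketched in Python. Several things here are assumptions of the sketch rather than statements about Gnumeric: distances are measured from the first and third quartiles, the quartiles use the `inclusive` interpolation method, the function name `classify_outliers` is hypothetical, and the scores are invented for illustration.

```python
from statistics import quantiles


def classify_outliers(data):
    """Split values into potential and extreme outliers using the IQR."""
    q1, _, q3 = quantiles(data, n=4, method="inclusive")
    iqr = q3 - q1
    potential, extreme = [], []
    for x in sorted(data):
        # Distance beyond the box; zero for values inside the box.
        distance = max(q1 - x, x - q3, 0)
        if distance > 3.0 * iqr:
            extreme.append(x)
        elif distance > 1.5 * iqr:
            potential.append(x)
    return potential, extreme


# Hypothetical scores, made up for this sketch.
scores = [10, 12, 13, 14, 15, 16, 17, 18, 19, 30, 45]
potential, extreme = classify_outliers(scores)
print(potential, extreme)  # [30] [45]
```

For these scores the quartiles are 13.5 and 18.5, so the IQR is 5; the value 30 is 11.5 beyond the third quartile and 45 is 26.5 beyond it.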

An example with hypothetical data sets is given to illustrate box plots. The data consists of two samples. Sample one (s1) is a uniform distribution and sample two (s2) is a highly skewed distribution.

Box and whisker plots can be generated by the Gnome Gnumeric program or by using on line box plot generators.

The box and whisker plot is a useful tool for exploring data and determining whether the data is symmetrically distributed, skewed, and whether the data has potential outliers - values far from the rest of the data as measured by the InterQuartile Range. The distribution of the data often impacts what types of analysis can be done on the data.

The distribution is also important to determining whether a measurement that was done is performing as intended. For example, in education a "good" test is usually one that generates a symmetric distribution of scores with few outliers. A highly skewed distribution of scores would suggest that the test was either too easy or too difficult. Outliers would suggest unusual performances on the test.

Two data sets, one uniform, the other with one potential outlier and one extreme outlier.

Consider the following data:

| | Data | mode | median | mean μ | min | max | range | midrange |
|---|---|---|---|---|---|---|---|---|
| Data set 1 | 5, 5, 5, 5 | 5 | 5 | 5 | 5 | 5 | 0 | 5 |
| Data set 2 | 2, 4, 6, 8 | none | 5 | 5 | 2 | 8 | 6 | 5 |
| Data set 3 | 2, 2, 8, 8 | none | 5 | 5 | 2 | 8 | 6 | 5 |

Neither the mode, the median, nor the mean clearly reveals the differences in the distribution of the data above. The mean and the median are the same for each data set. The mode is the same as the mean and the median for the first data set and is unavailable for the other two data sets (spreadsheets will report a mode of 2 for the last data set). A single number that would characterize how much the data is spread out would be useful.

As noted earlier, the range is one way to capture the spread of the data. The range is calculated by subtracting the smallest value from the largest value. In a spreadsheet:

=MAX(data)-MIN(data)

The range still does not characterize the difference between set 2 and 3: the last set has more data further away from the center of the data distribution. The range misses this difference.

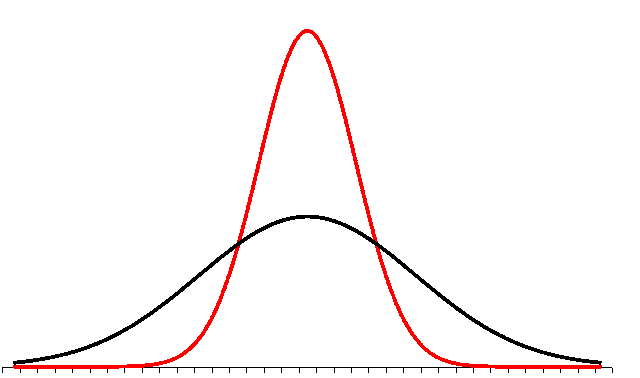

To capture the spread of the data we use a measure related to the average distance of the data from the mean. We call this the standard deviation. If we have a population, we report this average distance as the population standard deviation. If we have a sample, then our average distance value may underestimate the actual population standard deviation. As a result the formula for sample standard deviation adjusts the result mathematically to be slightly larger. For our purposes these numbers are calculated using spreadsheet functions.

One way to distinguish the difference in the distribution of the numbers in data set 2 and data set 3 above is to use the standard deviation.

| | Data | mean μ | stdev |
|---|---|---|---|
| Data set 1 | 5, 5, 5, 5 | 5 | 0.00 |
| Data set 2 | 2, 4, 6, 8 | 5 | 2.58 |
| Data set 3 | 2, 2, 8, 8 | 5 | 3.46 |

The function that calculates the sample standard deviation is:

=STDEV(data)
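For readers curious about what the function does, the following Python sketch uses the standard textbook formulas: the squared distances from the mean are summed, divided by n − 1 for a sample (or by n for a population), and the square root is taken. The function names are chosen for this sketch only.

```python
from math import sqrt


def sample_stdev(data):
    """Sample standard deviation: divide by n - 1."""
    n = len(data)
    mean = sum(data) / n
    squared_distances = sum((x - mean) ** 2 for x in data)
    return sqrt(squared_distances / (n - 1))


def population_stdev(data):
    """Population standard deviation: divide by n."""
    n = len(data)
    mean = sum(data) / n
    squared_distances = sum((x - mean) ** 2 for x in data)
    return sqrt(squared_distances / n)


for data in ([5, 5, 5, 5], [2, 4, 6, 8], [2, 2, 8, 8]):
    print(data, round(sample_stdev(data), 2), round(population_stdev(data), 2))
```

Dividing by n − 1 rather than n is what makes the sample result slightly larger than the population result for the same numbers.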

In this text the symbol for the sample standard deviation is usually sx.

In this text the symbol for the population standard deviation is usually σ.

The symbol sx usually refers to the standard deviation of single variable x data. If there is y data, the standard deviation of the y data is sy. Other symbols that are used for standard deviation include s and σx. Some calculators use the unusual and confusing notations σxn−1 and σxn for sample and population standard deviations.

In this class we always use the sample standard deviation in our calculations. The sample standard deviation is calculated in a way such that the sample standard deviation is slightly larger than the result of the formula for the population standard deviation. This adjustment is needed because a population tends to have a slightly larger spread than a sample. There is a greater probability of outliers in the population data.

The Coefficient of Variation is calculated by dividing the standard deviation (usually the sample standard deviation) by the mean.

=STDEV(data)/AVERAGE(data)

Note that the CV can be expressed as a percentage: Group 2 has a CV of 52% while group 3 has a CV of 69%. A deviation of 3.46 is large for a mean of 5 (3.46/5 = 69%) but would be small if the mean were 50 (3.46/50 = 7%). So the CV can tell us how important the standard deviation is relative to the mean.
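The percentages quoted above can be reproduced with a short Python sketch using the standard library. The function name `coefficient_of_variation` is chosen for this illustration, and the two lists are data sets 2 and 3 from the tables earlier in the chapter.

```python
from statistics import mean, stdev


def coefficient_of_variation(data):
    """Sample standard deviation divided by the mean."""
    return stdev(data) / mean(data)


cv_two = coefficient_of_variation([2, 4, 6, 8])
cv_three = coefficient_of_variation([2, 2, 8, 8])
print(f"{cv_two:.0%} {cv_three:.0%}")  # 52% 69%
```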

As an approximation, the standard deviation for data that has a symmetrical, heap-like distribution is roughly one-quarter of the range. If given only minimum and maximum values for data, this rule of thumb can be used to estimate the standard deviation.

At least 75% of the data will be within two standard deviations of the mean, regardless of the shape of the distribution of the data.

At least 89% of the data will be within three standard deviations of the mean, regardless of the shape of the distribution of the data.
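The 75% and 89% figures match a general result usually credited to Chebyshev: at least 1 − 1/k² of any data set lies within k standard deviations of the mean. The text does not name the rule, so treat that attribution, and the function name below, as assumptions of this sketch.

```python
def minimum_fraction_within(k):
    """Smallest possible fraction of data within k standard deviations."""
    if k <= 1:
        raise ValueError("the bound is only informative for k greater than 1")
    return 1 - 1 / k ** 2


print(minimum_fraction_within(2))            # 0.75
print(round(minimum_fraction_within(3), 2))  # 0.89
```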

If the shape of the distribution of the data is a symmetrical heap, then as much as 95% of the data will be within two standard deviations of the mean.

Data beyond two standard deviations away from the mean is considered "unusual" data.

Levels of measurement and appropriate measures

| Level of measurement | Appropriate measure of middle | Appropriate measure of spread |

|---|---|---|

| nominal | mode | none or number of categories |

| ordinal | median | range |

| interval | median or mean | range or standard deviation |

| ratio | mean | standard deviation |

At the interval level of measurement either the median or mean may be more appropriate depending on the specific system being studied. If the median is more appropriate, then the range should be quoted as a measure of the spread of the data. If the mean is more appropriate, then the standard deviation should be used as a measure of the spread of the data.

Another way to understand the levels at which a particular type of measurement can be made is shown in the following table.

Levels at which a particular statistic or parameter has meaning

| Statistic/Parameter | Nominal | Ordinal | Interval | Ratio |
|---|---|---|---|---|

| sample size | ||||

| mode | ||||

| minimum | ||||

| maximum | ||||

| range | ||||

| median | ||||

| mean | ||||

| standard deviation | ||||

| coefficient of variation | ||||

For example, a mode, median, and mean can be calculated for ratio level measures. Of those, the mean is usually considered the best measure of the middle for a random sample of ratio level data.

When there are a countable number of values that result from observations, we say the variable producing the results is discrete. The nominal and ordinal levels of measurement almost always measure a discrete variable.

The following examples are typical values for discrete variables:

The last example above is a typical result of a type of survey called a Likert survey, developed by Rensis Likert in 1932.

When reporting the "middle value" for a discrete distribution at the ordinal level it is usually more appropriate to report the median. For further reading on the matter of using mean values with discrete distributions refer to the pages by Nora Mogey and by the Canadian Psychiatric Association.

Note that if the variable measures only the nominal level of measurement, then only the mode is likely to have any statistical "meaning"; the nominal level of measurement has no "middle" per se.

There may be rare instances in which looking at the mean value and standard deviation is useful for looking at comparative performance, but it is not a recommended practice to use the mean and standard deviation on a discrete distribution. The Canadian Psychiatric Association discusses when one may be able to "break" the rules and calculate a mean on a discrete distribution. Even then, bear in mind that ratios between means have no "meaning!"

For example, questionnaires often generate discrete results:

| | Never 0 | About once a month 1 | About once a week 2 | A few times a week 3 | Every day 4 |
|---|---|---|---|---|---|
| How often do you drink caffeinated drinks such as coffee, tea, or cola? | | | | | |
| How often do you chew tobacco without betelnut? | | | | | |
| How often do you chew betelnut without tobacco? | | | | | |
| How often do you chew betelnut with tobacco? | | | | | |
| How often do you drink sakau en Pohnpei? | | | | | |
| How often do you drink beer? | | | | | |
| How often do you drink wine? | | | | | |
| How often do you drink hard liquor (whisky, rum, vodka, tequila, etc.)? | | | | | |
| How often do you smoke cigarettes? | | | | | |
| How often do you smoke marijuana? | | | | | |
| How often do you use controlled substances other than marijuana (methamphetamines, cocaine, crack, ice, shabu, etc.)? | | | | | |

The results of such a questionnaire are numeric values from 0 to 4. For an example of a real student alcohol questionnaire, see: http://www.indiana.edu/~engs/saq.html

When there is an infinite (or uncountable) number of values that may result from observations, we say that the variable is continuous. Physical measurements such as height, weight, speed, and mass are considered continuous measurements. Bear in mind that our measurement device might be accurate to only a certain number of decimal places. The variable is continuous because better measuring devices should produce more accurate results.

The following examples are continuous variables:

When reporting the "middle value" for a continuous distribution it is appropriate to report the mean and standard deviation. The mean and standard deviation only have "meaning" for the ratio level of measurement.

| Level of measurement | Typical variable type | Appropriate measure of middle | Appropriate measure of variation |

|---|---|---|---|

| nominal | discrete | mode | none |

| ordinal | discrete | median (can also report mode) | range |

| ratio | continuous | mean (can also report median and mode) | sample standard deviation |

Z-scores are a useful way to combine scores from data that has different means and standard deviations. Z-scores are an application of the above measures of center and spread.

Remember that the mean is the result of adding all of the values in the data set and then dividing by the number of values in the data set. The words mean and average are used interchangeably in statistics.

Recall also that the standard deviation can be thought of as a mathematical calculation of the average distance of the data from the mean of the data. Note that although I use the words average and mean, the sentence could also be written "the mean distance of the data from the mean of the data."

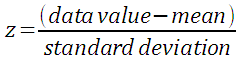

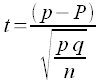

Z-scores simply indicate how many standard deviations a particular score is away from the mean. This is termed "relative standing" as it is a measure of where in the data the score is relative to the mean and "standardized" by the standard deviation.

If the population mean μ and population standard deviation σ are known, then the formula for the z-score for a data value x is:

z = (x − μ)/σ

Using the sample mean x̄ and sample standard deviation sx, the formula for a data value x is:

z = (x − x̄)/sx

Note the parentheses!

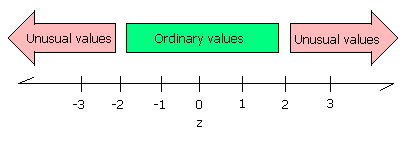

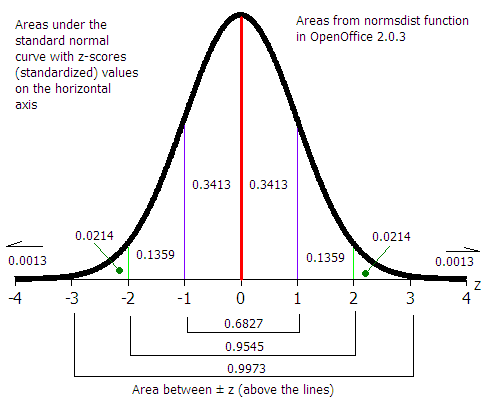

Data that is two standard deviations below the mean will have a z-score of −2; data that is two standard deviations above the mean will have a z-score of +2. Data beyond two standard deviations away from the mean will have z-scores below −2 or above +2. A data value with a z-score below −2 or above +2 is considered an unusual value, an extraordinary data value. These values may also be outliers on a box plot depending on the distribution. Box plot outliers and extraordinary z-scores are two ways to characterize unusually extreme data values. There is no simple relationship between box plot outliers and extraordinary z-scores.

Suppose a test has a mean score of 10 and a standard deviation of 2 with a total possible of 20. Suppose a second test has the same mean of 10 and total possible of 20 but a standard deviation of 8.

On the first test a score of 18 would be rare, an unusual score. On the first test at least 89% of the students would have scored between 4 and 16 (three standard deviations below the mean and three standard deviations above the mean).

On the second test a score of 18 would only be one standard deviation above the mean. This would not be unusual because the second test had more spread.

Adding two scores of 18 and saying the student had a score of 36 out of 40 devalues what is a phenomenal performance on the first test.

Converting to z-scores, the relative strength of the performance on test one is valued more strongly. The z-score on test one would be (18-10)/2 = 4, while on test two the z-score would be (18-10)/8 = 1. The unusually outstanding performance on test one is now reflected in the sum of the z-scores where the first test contributes a sum of 4 and the second test contributes a sum of 1.
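The arithmetic for the two tests can be written as a small Python sketch. The function name `z_score` is chosen for this illustration; the means and standard deviations are the ones given for the two tests above.

```python
def z_score(value, mean, stdev):
    """Number of standard deviations a value sits away from the mean."""
    return (value - mean) / stdev  # note the parentheses


z_test_one = z_score(18, 10, 2)
z_test_two = z_score(18, 10, 8)

print(z_test_one, z_test_two)   # 4.0 1.0
print(z_test_one + z_test_two)  # 5.0
```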

When values are converted to z-scores, the mean of the z-scores is zero. A student who scored a 10 on either of the tests above would have a z-score of 0. In the world of z-scores, a zero is average!
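The claim that z-scores have a mean of zero can be checked on any small data set. The sketch below uses the values 2, 4, 6, 8 purely as an illustration; the function name `z_scores` is hypothetical.

```python
from statistics import mean, stdev


def z_scores(data):
    """Convert every value to a z-score using the sample statistics."""
    center = mean(data)
    spread = stdev(data)
    return [(x - center) / spread for x in data]


converted = z_scores([2, 4, 6, 8])
print([round(z, 2) for z in converted])  # [-1.16, -0.39, 0.39, 1.16]
print(mean(converted))   # zero, up to floating point error
print(stdev(converted))  # one, up to floating point error
```

The standard deviation of the z-scores coming out as one is the sense in which the scores have been "standardized."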

Z-scores also adjust for different means due to differing total possible points on different tests.

Consider again the first test that had a mean score of 10 and a standard deviation of 2 with a total possible of 20. Now consider a third test with a mean of 100 and standard deviation of 40 with a total possible of 200. On this third test a score of 140 would be high, but not unusually high.

Adding the scores and saying the student had a score of 158 out of 220 again devalues what is a phenomenal performance on test one. The score on test one is dwarfed by the total possible on test three. Put another way, the 18 points of test one are contributing only 11% of the 158 score. The other 89% is the test three score. We are giving an eight-fold greater weight to test three. The z-scores of 4 and 1 would add to five. This gives equal weight to each test and the resulting sum of the z-scores reflects the strong performance on test one with an equal weight to the ordinary performance on test three.

Z-scores only provide the relative standing. If a test is given again and all students who take the test do better the second time, then the mean rises and like a tide "lifts all the boats equally." Thus an individual student might do better, but because the mean rose, their z-score could remain the same. This is also the downside to using z-scores to compare performances between tests - changes in "sea level" are obscured. One would have to know the mean and standard deviation and whether they changed to properly interpret a z-score.

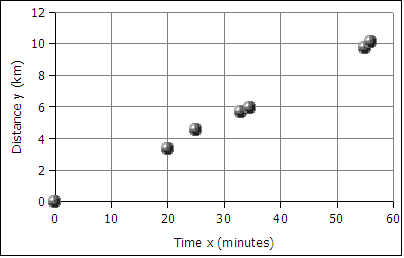

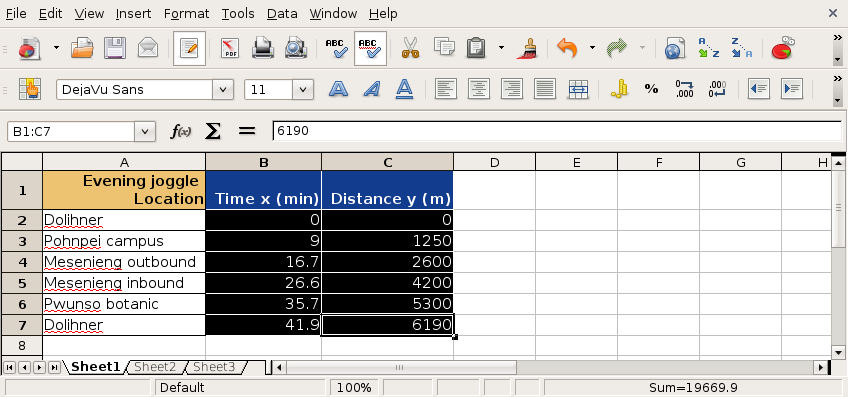

A runner runs from the College of Micronesia-FSM National campus to PICS via the powerplant/Nahnpohnmal back road. The runner tracks his time and distance.

| Location | Time x (minutes) | Distance y (km) |
|---|---|---|
| College | 0 | 0 |
| Dolon Pass | 20 | 3.3 |
| Turn-off for Nahnpohnmal | 25 | 4.5 |
| Bottom of the beast | 33 | 5.7 |
| Top of the beast | 34.5 | 5.9 |
| Track West | 55 | 9.7 |
| PICS | 56 | 10.1 |

Is there a relationship between the time and the distance? If there is a relationship, then data will fall in a patterned fashion on an xy graph. If there is no relationship, then there will be no shape to the pattern of the data on a graph.

If the relationship is linear, then the data will fall roughly along a line. Plotting the above data yields the following graph:

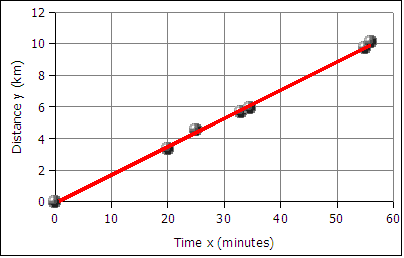

The data falls roughly along a line, so the relationship appears to be linear. If we can find the equation of a line through the data, then we can use the equation to predict how long it will take the runner to cover distances not included in the table above, such as five kilometers. In the next image a best fit line has been added to the graph.

The best fit line is also called the least squares line because the mathematical process for determining the line minimizes the sum of the squares of the vertical displacements of the data points from the line. The process of determining the best fit line is also known as performing a linear regression. Sometimes the line itself is referred to as a linear regression.
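
Written out, the quantity being minimized and the resulting slope and intercept take the following standard form. The symbols m and b for the slope and intercept, and the bars for the means of the x and y values, are notation chosen here for illustration; the spreadsheet performs this calculation internally.

```latex
\text{minimize}\quad \sum_{i=1}^{n} \bigl(y_i - (m x_i + b)\bigr)^2
\qquad\Longrightarrow\qquad
m = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
b = \bar{y} - m\,\bar{x}
```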

The graph of time versus distance for a runner is a line because a runner runs at the same pace kilometer after kilometer.

A spreadsheet is used to find the slope and the y-intercept of the best fit line through the data.

To get the slope m use the function:

=SLOPE(y-values,x-values)

Note that the y-values are entered first, the x-values are entered second. This is the reverse of traditional algebraic order where coordinate pairs are listed in the order (x, y). The x and y-values are usually arranged in columns. The column containing the x data is usually to the left of the column containing the y-values. An example where the data is in the first two columns from row two to forty-two can be seen below.

=SLOPE(B2:B42,A2:A42)

The intercept is usually the starting value for a function. Often this is the y data value at time zero, or distance zero.

To get the intercept:

=INTERCEPT(y-values,x-values)

Note that intercept also reverses the order of the x and y values!

For the runner data above the equation is:

distance = slope * time + y-intercept

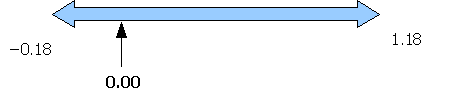

distance = 0.18 * time + (−0.13)

y = 0.18 * x + (−0.13)

or

y = 0.18x − 0.13

where x is the time and y is the distance
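
For readers who want to confirm the spreadsheet result by another route, here is a minimal Python sketch of the least squares calculation applied to the runner data from the table above. The function names `slope` and `intercept` and their y-first argument order are assumptions chosen to mirror the spreadsheet functions; this is not how the spreadsheet itself is implemented.

```python
def slope(y_values, x_values):
    """Least squares slope; arguments follow the spreadsheet's y-first order."""
    n = len(x_values)
    mean_x = sum(x_values) / n
    mean_y = sum(y_values) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(x_values, y_values))
    sxx = sum((x - mean_x) ** 2 for x in x_values)
    return sxy / sxx

def intercept(y_values, x_values):
    """Least squares y-intercept; arguments follow the spreadsheet's y-first order."""
    n = len(x_values)
    return sum(y_values) / n - slope(y_values, x_values) * sum(x_values) / n

time_minutes = [0, 20, 25, 33, 34.5, 55, 56]        # x-values
distance_km = [0, 3.3, 4.5, 5.7, 5.9, 9.7, 10.1]    # y-values

m = slope(distance_km, time_minutes)
b = intercept(distance_km, time_minutes)
print(round(m, 2), round(b, 2))   # 0.18 -0.13
```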

In algebra the equation of a line is written as y = m*x + b where m is the slope and b is the intercept. In statistics the equation of a line is written as y = a + b*x where a is the intercept (the starting value) and b is the slope. The two fields have their own traditions, and the letters used for the slope and intercept differ between mathematics and statistics.

Using the y = mx + b equation we can make predictions about how far the runner will travel given a time, or how long the runner will run given a distance. For example, according to the equation above, a 45 minute run will result in the runner covering 0.18*45 − 0.13 = 7.97 kilometers. Using the inverse of the equation, x = (y + 0.13)/0.18, we can predict that the runner can run a five kilometer distance in 28.5 minutes (28 minutes and 30 seconds).

Given any time, we can calculate the distance. Given any distance, we can solve for the time.
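
Both directions of prediction can be sketched in Python using the rounded slope and intercept from the text. The function names `predict_distance` and `predict_time` are illustrative assumptions.

```python
SLOPE = 0.18        # kilometers per minute, rounded as in the text
INTERCEPT = -0.13   # kilometers, rounded as in the text

def predict_distance(time_minutes):
    """Apply y = m*x + b to get distance from time."""
    return SLOPE * time_minutes + INTERCEPT

def predict_time(distance_km):
    """Invert y = m*x + b to solve for x: x = (y - b) / m."""
    return (distance_km - INTERCEPT) / SLOPE

print(round(predict_distance(45), 2))   # 7.97
print(round(predict_time(5), 1))        # 28.5
```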

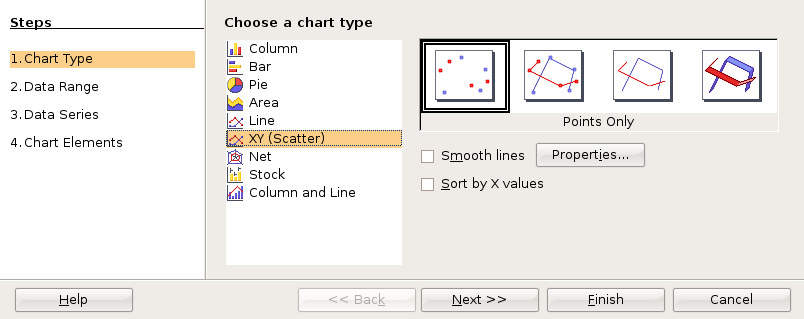

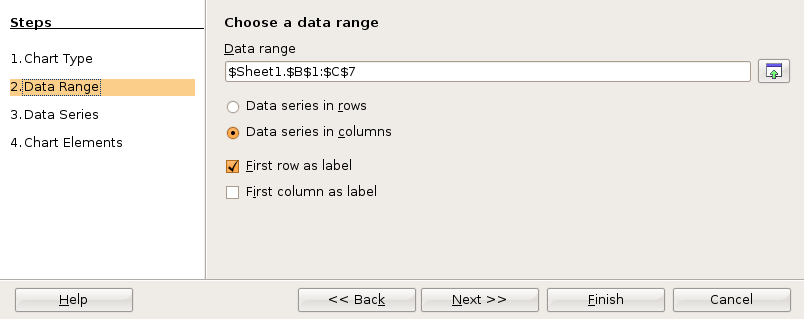

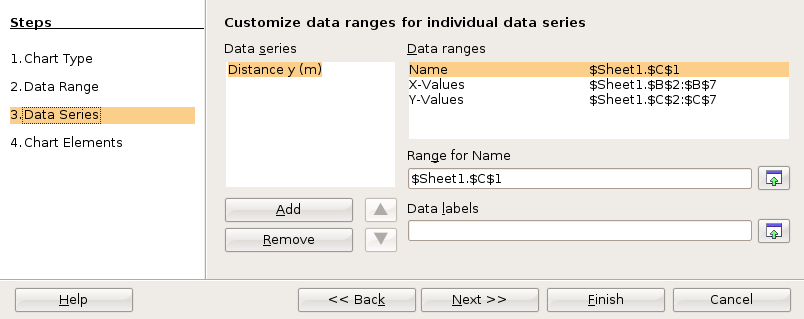

Making an xy scattergraph using LibreOffice.org

First select the data to be graphed.

Then click on the chart wizard button in the toolbar to start the chart wizard.

Choose an XY (Scatter) graph in the first dialog box.

For statistics class, click through the next two dialog boxes.

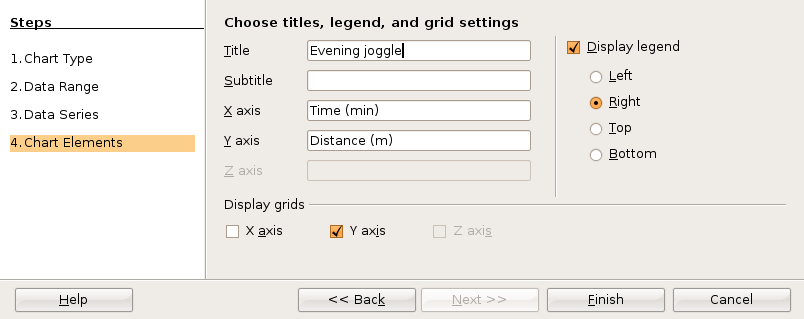

In the fourth and final dialog box you can set up the x and y axis labels as well as a chart title. Then click on Finish.

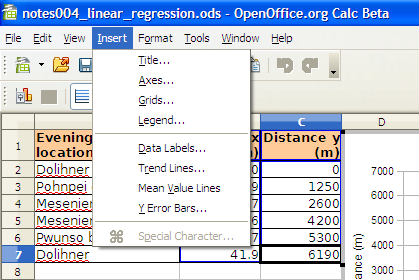

Before clicking anywhere else, choose Insert: Trend Lines from the menu.

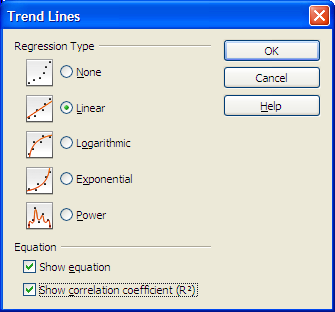

The Trend Lines dialog box permits selection of linear and non-linear regression lines. For a straight line regression, choose the linear regression type. Click on the check boxes for the function and the R². You may have to click twice to obtain the check mark.

Click on OK to close the dialog box.

All versions of OpenOffice.org Calc can calculate the slope and intercept using spreadsheet functions.

After plotting the x and y data, the xy scattergraph helps determine the nature of the relationship between the x values and the y values. If the points lie along a straight line, then the relationship is linear. If the points form a smooth curve, then the relationship is non-linear (not a line). If the points form no pattern then the relationship is random.

Relationships between two sets of data can be positive: the larger x gets, the larger y gets.

Relationships between two sets of data can be negative: the larger x gets, the smaller y gets.

Relationships between two sets of data can be non-linear.

Relationships between two sets of data can be random: no relationship exists!

For the runner data above, the relationship is a positive relationship. The points lie along a line, therefore the relationship is linear.

An example of a negative relationship would be the number of beers consumed by a student and a measure of that student's physical coordination. The more beers consumed, the less coordinated the student!

For a linear relationship, the closer to a straight line the points fall, the stronger the relationship. The measurement that describes how closely the points fall to a line is called the correlation.

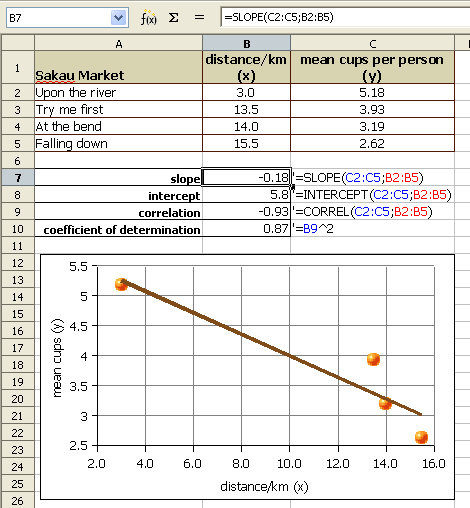

The following example explores the correlation between the distance of a business from a city center and the amount of product sold per person. In this case the businesses are places that serve pounded Piper methysticum plant roots, known elsewhere as kava but known locally as sakau. This business is unique in that customers self-limit their purchases, buying only as many cups of sakau as necessary to get the warm, sleepy feeling that the drink induces. The businesses are locally referred to as sakau markets. The local theory is that the further one travels from the main town (and thus deeper into the countryside of Pohnpei) the stronger the sakau that is served. If this is the case, then the mean number of cups should fall with distance from the main town on the island.

The following table uses actual data collected from these businesses; the names of the businesses have been changed.

| Sakau Market | distance/km (x) | mean cups per person (y) |
|---|---|---|
| Upon the river | 3.0 | 5.18 |
| Try me first | 13.5 | 3.93 |
| At the bend | 14.0 | 3.19 |
| Falling down | 15.5 | 2.62 |

The first question a statistician would ask is whether there is a relationship between the distance and mean cup data. Whether there is a relationship is best seen in an xy scattergraph of the data.

If we plot the points on an xy graph using a spreadsheet, the y-values can be seen to fall with increasing x-value. The data points, while not all exactly on one line, are not far away from the best fit line. The best fit line indicates a negative relationship. The larger the distance, the smaller the mean number of cups consumed.

We use a number called the Pearson product-moment correlation coefficient r to tell us how well the data fits to a straight line. The full name is long, so in statistics this number is simply called r. The value of r can be calculated using a spreadsheet function.

The function for calculating r is:

=CORREL(y-values,x-values)

Note that the order does not technically matter. The correlation of x to y is the same as that of y to x. For consistency the y-data,x-data order is retained above.
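
The symmetry of the correlation can be checked with a minimal Python sketch using the sakau market data from the table above. The function name `correl` is an assumption chosen to echo the spreadsheet function; the body is the standard Pearson formula rather than the spreadsheet's own code.

```python
from math import sqrt

def correl(a_values, b_values):
    """Pearson product-moment correlation coefficient r of two equal-length lists."""
    n = len(a_values)
    mean_a = sum(a_values) / n
    mean_b = sum(b_values) / n
    sab = sum((a - mean_a) * (b - mean_b) for a, b in zip(a_values, b_values))
    saa = sum((a - mean_a) ** 2 for a in a_values)
    sbb = sum((b - mean_b) ** 2 for b in b_values)
    return sab / sqrt(saa * sbb)

distance_km = [3.0, 13.5, 14.0, 15.5]     # x-values
mean_cups = [5.18, 3.93, 3.19, 2.62]      # y-values

r = correl(mean_cups, distance_km)          # y first, as in the spreadsheet
r_swapped = correl(distance_km, mean_cups)  # x first gives the same value
print(round(r, 2), round(r_swapped, 2))     # -0.93 -0.93
```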

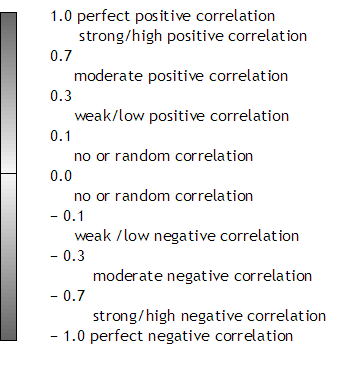

The Pearson product-moment correlation coefficient r (or just correlation r) values that result from the formula are always between −1 and 1. One is perfect positive linear correlation. Negative one is perfect negative linear correlation. If the correlation is zero or close to zero, then there is no linear relationship between the variables.

A guideline to r values:

Note that perfect has to be perfect: 0.99999 is very close, but not perfect. In real world systems perfect correlation, positive or negative, is rarely or never seen. A correlation of 0.0000 is also rare. Systems that are purely random are also rarely seen in the real world.

Spreadsheets usually round to two decimals when displaying decimal numbers. A correlation r of 0.999 is displayed as "1" by spreadsheets. Use the Format menu to select the cells item. In the cells dialog box, click on the numbers tab to increase the number of decimal places. When the correlation is not perfect, adjust the decimal display and write out all the decimals.

The correlation r of −0.93 is a strong negative correlation. The relationship is strong and the relationship is negative. The equation of the best fit line is y = −0.18x + 5.8, where y is the mean number of cups and x is the distance from the main town. The equations that generated the slope, y-intercept, and correlation can be seen in the earlier image.

The strong relationship means that the equation can be used to predict mean cup values, at least for distances between 3.0 and 15.5 kilometers from town.

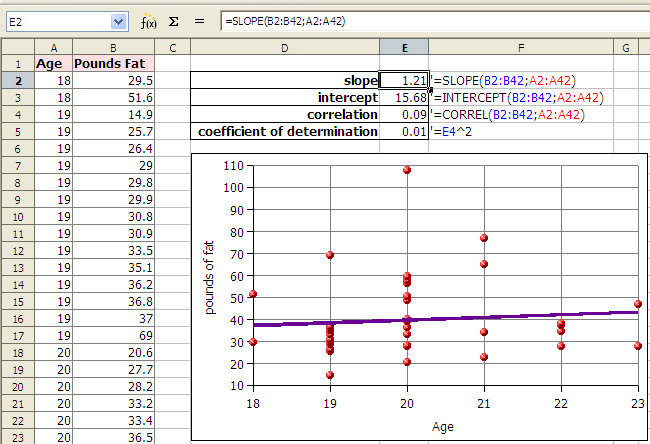

A second example explores the correlation between the age of female students in a statistics course and their pounds of body fat. The table provides the x and y data.

The table below continues beyond the boundaries of the page.

| Age/years (x) | 18 | 18 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 19 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 20 | 21 | 21 | 21 | 21 | 22 | 22 | 22 | 22 | 23 | 23 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Fat/pounds (y) | 29.5 | 51.6 | 14.9 | 25.7 | 26.4 | 29.0 | 29.8 | 29.9 | 30.8 | 30.9 | 33.5 | 35.1 | 36.2 | 36.8 | 37.0 | 69.0 | 20.6 | 27.7 | 28.2 | 33.2 | 33.4 | 36.5 | 39.3 | 39.4 | 40.2 | 48.9 | 50.4 | 56.7 | 57.8 | 59.7 | 107.8 | 22.7 | 34.2 | 65.0 | 76.9 | 28.1 | 34.8 | 37.2 | 38.3 | 28.0 | 46.8 |

The first question a statistician would ask is whether the xy scattergraph shows a relationship between the age of a female student at COMFSM and her pounds of body fat. Can we use our data to predict pounds of body fat based on age alone?

If we plot the points on an xy graph using a spreadsheet, the data does not appear to be strongly linear. The data appears to be scattered randomly about the graph. Although a spreadsheet is able to give us a best fit line (a linear regression or least squares line), we will later have to consider whether the relationship is strong enough to make the equation useful.

In the example above the correlation r is 0.09. Zero would be random correlation. This value is so close to zero that the correlation is effectively random. The relationship is random. There is no relationship. The linear equation y = 1.21x + 15.68, where y is the pounds of fat and x is the age, cannot be used to predict the pounds of fat given the age.
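
The slope, intercept, and correlation quoted above can be reproduced with a Python sketch. The data is copied from the age and fat table above and grouped by age only to keep the listing short; the dictionary layout and variable names are assumptions made for this illustration.

```python
from math import sqrt

# Pounds of fat from the table above, grouped by age in years.
fat_by_age = {
    18: [29.5, 51.6],
    19: [14.9, 25.7, 26.4, 29.0, 29.8, 29.9, 30.8, 30.9, 33.5, 35.1,
         36.2, 36.8, 37.0, 69.0],
    20: [20.6, 27.7, 28.2, 33.2, 33.4, 36.5, 39.3, 39.4, 40.2, 48.9,
         50.4, 56.7, 57.8, 59.7, 107.8],
    21: [22.7, 34.2, 65.0, 76.9],
    22: [28.1, 34.8, 37.2, 38.3],
    23: [28.0, 46.8],
}

# Flatten into paired x (age) and y (fat) lists.
ages = [age for age, fats in fat_by_age.items() for _ in fats]
fat = [f for fats in fat_by_age.values() for f in fats]

n = len(ages)
mean_x, mean_y = sum(ages) / n, sum(fat) / n
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(ages, fat))
sxx = sum((x - mean_x) ** 2 for x in ages)
syy = sum((y - mean_y) ** 2 for y in fat)

slope = sxy / sxx
intercept = mean_y - slope * mean_x
r = sxy / sqrt(sxx * syy)

print(round(slope, 2), round(intercept, 2), round(r, 2))   # 1.21 15.68 0.09
```

The spreadsheet will always return a slope and an intercept; the near-zero r is what signals that the line has little predictive value.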

We usually cannot make meaningful predictions for x values that are below the minimum x or above the maximum x in the data. In the example of the runner, we could calculate how far the runner would run in 72 hours (three days and three nights), but it is unlikely the runner could run continuously for that length of time. For some systems values can be predicted below the minimum x or above the maximum x value. When we do this it is called extrapolation. Very few systems can be extrapolated, but some systems remain linear for values near to the provided x values.
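
One way to guard against accidental extrapolation is to refuse predictions outside the observed range of x values. The following Python sketch applies that idea to the runner equation, using the rounded slope and intercept and the observed times of 0 to 56 minutes from the runner table. The function name and the choice to raise an error are design assumptions for illustration, not a rule from the text.

```python
MIN_TIME, MAX_TIME = 0, 56   # observed range of times in minutes

def predict_distance(time_minutes):
    """Predict distance in km, but only inside the observed range of times."""
    if not MIN_TIME <= time_minutes <= MAX_TIME:
        raise ValueError(
            f"{time_minutes} minutes is outside the data range "
            f"{MIN_TIME} to {MAX_TIME}; prediction would be an extrapolation"
        )
    return 0.18 * time_minutes - 0.13

print(round(predict_distance(45), 2))   # 7.97

# 72 hours is 4320 minutes. The bare equation would give
# 0.18 * 4320 - 0.13 = 777.47 km, which no runner covers without stopping.
try:
    predict_distance(72 * 60)
except ValueError as error:
    print(error)
```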

The coefficient of determination, r², is a measure of how much of the variation in the dependent y variable is explained by the variation in the independent x variable. This does NOT imply causation. In spreadsheets the ^ symbol (shift-6) is exponentiation. In OpenOffice Calc we can square the correlation with the following formula:

=(CORREL(y-values,x-values))^2

The result, which is between 0 and 1 inclusive, is often expressed as a percentage.
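
As a worked instance, the coefficient of determination for the sakau market data from earlier in the chapter can be computed in Python. This is a minimal sketch; the variable names are illustrative assumptions and the correlation is computed with the standard Pearson formula.

```python
from math import sqrt

distance_km = [3.0, 13.5, 14.0, 15.5]     # x-values
mean_cups = [5.18, 3.93, 3.19, 2.62]      # y-values

n = len(distance_km)
mean_x = sum(distance_km) / n
mean_y = sum(mean_cups) / n
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(distance_km, mean_cups))
sxx = sum((x - mean_x) ** 2 for x in distance_km)
syy = sum((y - mean_y) ** 2 for y in mean_cups)

r = sxy / sqrt(sxx * syy)     # correlation
r_squared = r ** 2            # coefficient of determination

print(round(r, 2))            # -0.93
print(f"{r_squared:.0%}")     # 87%
```

Squaring removes the sign, so the result says nothing about whether the relationship is positive or negative, only how much of the variation the line accounts for.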

Imagine a Yamaha outboard motor fishing boat sitting out beyond the reef in an open ocean swell. The swell moves the boat gently up and down. Now suppose there is a small boy moving around in the boat. The boat is rocked and swayed by the boy. The total motion of the boat is in part due to the swell and in part due to the boy. Maybe the swell accounts for 70% of the boat's motion while the boy accounts for 30% of the motion. A model of the boat's motion that took into account only the motion of the ocean would generate a coefficient of determination of about 70%.

Finding that a correlation exists does not mean that the x-values cause the y-values. A line does not imply causation: Your age does not cause your pounds of body fat, nor does time cause distance for the runner.

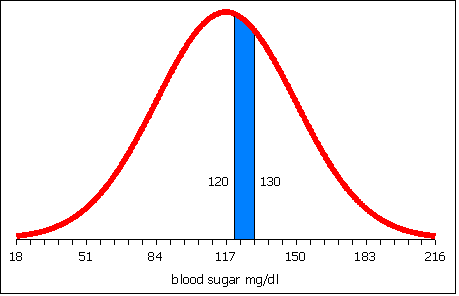

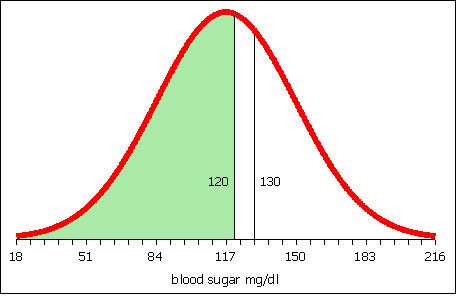

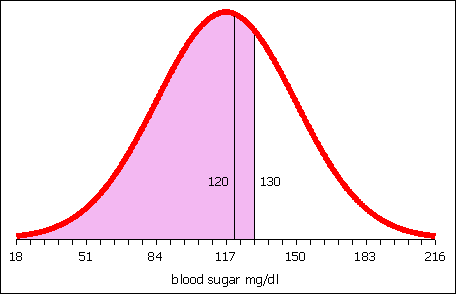

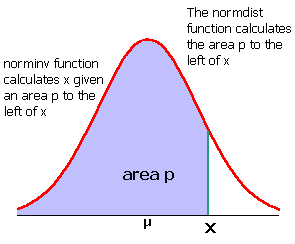

Studies of Micronesia in the mid 1800s would have shown an increase each year in both church attendance and sexually transmitted diseases (STDs). That does NOT mean churches cause STDs! What the data is revealing is a common variable underlying our data: foreigners brought both STDs and churches. Any correlation is simply the result of the common impact of the increasing influence of foreigners.